Here is a summary of some basic commands I used to test the Kafka service installed in CDH.

1. Basic Concepts

Each host in the Kafka cluster runs a server called a broker, which stores the messages sent to topics and serves consumer requests.

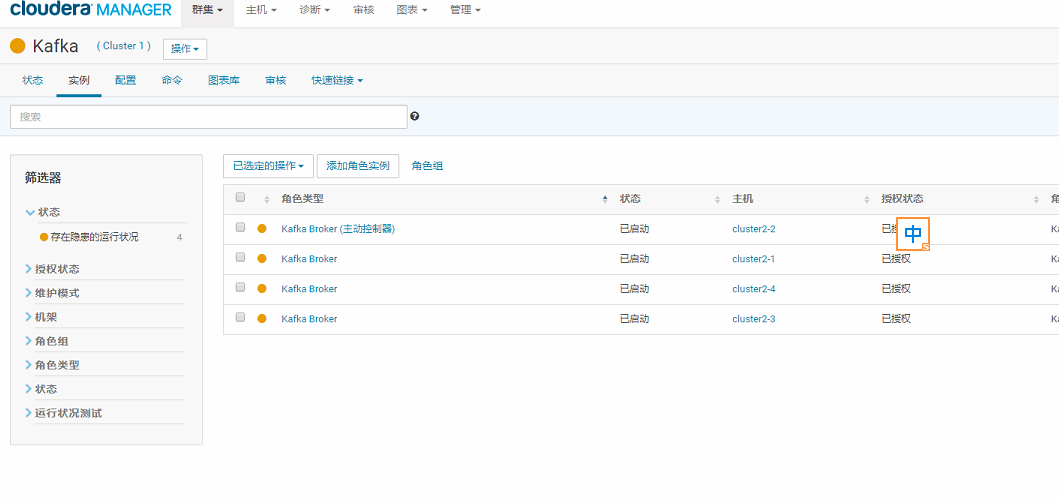

First, look at the Kafka service instances and the servers they are installed on:

Note:

With a standard Kafka installation, commands are run like this: ./bin/kafka-topics.sh --zookeeper cluster2-4:2181 ...

However, with Kafka installed through CDH you do not need to write the ./bin/kafka-topics.sh part at all; you simply run kafka-topics directly. This is an important difference, so pay special attention to it when using Kafka installed via CDH.

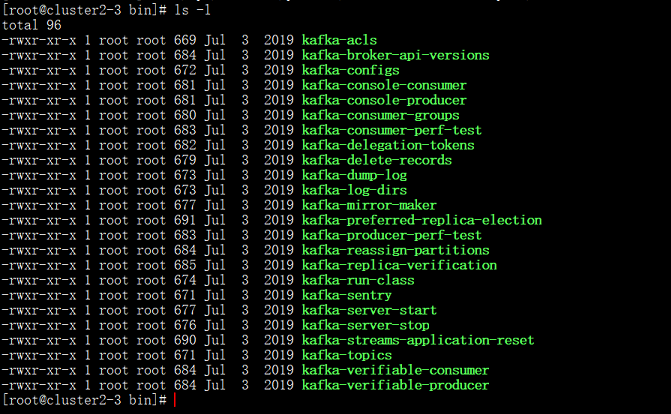

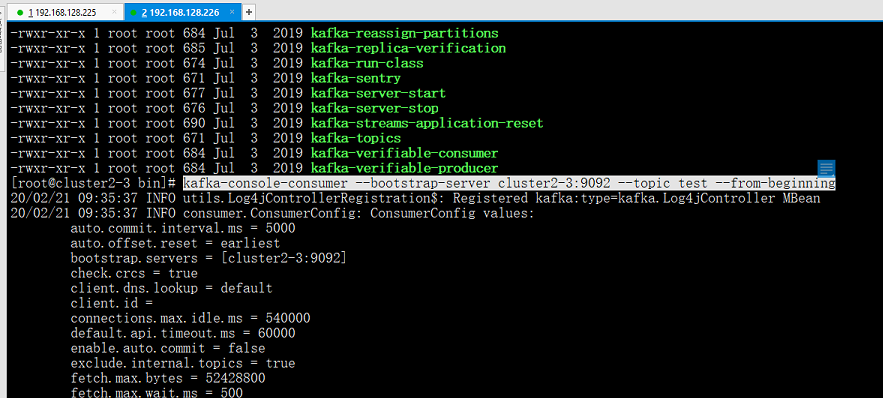

The available commands can be found in this directory:

/opt/cloudera/parcels/KAFKA-4.1.0-1.4.1.0.p0.4/bin

2. Using Topics

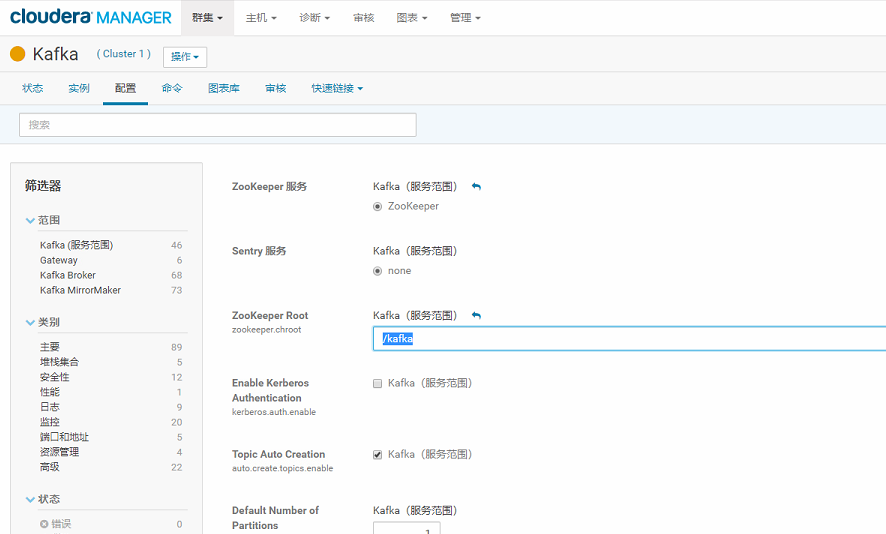

Before testing the topic commands, first check the ZooKeeper Root configured for the Kafka service in CDH. Here it is "/kafka".

So the topic commands take the following form:

kafka-topics --zookeeper cluster2-4:2181/kafka ......

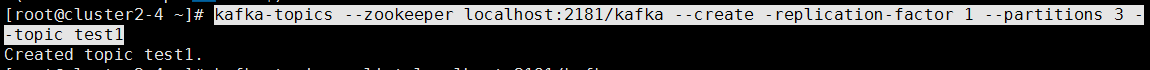
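The --zookeeper value is simply the ZooKeeper host list followed by the ZooKeeper Root. As a minimal sketch (zk_connect is a hypothetical helper, not part of Kafka or CDH), the value can be assembled like this; with several ZooKeeper hosts, the chroot is appended once, after the last host:

```shell
# Hypothetical helper (not part of Kafka or CDH): build the --zookeeper value
# from a comma-separated host:port list and the Kafka service's ZooKeeper Root.
zk_connect() {
  hosts="$1"   # e.g. cluster2-4:2181 or cluster2-3:2181,cluster2-4:2181
  root="$2"    # e.g. /kafka, or / when no chroot is configured
  # Drop one trailing slash: "/" becomes "" (no chroot), "/kafka/" becomes "/kafka".
  root="${root%/}"
  # The chroot is appended once, after the whole host list.
  printf '%s%s\n' "$hosts" "$root"
}
```

For example, kafka-topics --zookeeper "$(zk_connect cluster2-4:2181 /kafka)" --list queries the /kafka root, while passing / as the root gives the plain cluster2-4:2181 form.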

A. Create a topic named test:

kafka-topics --zookeeper cluster2-4:2181/kafka --create --replication-factor 1 --partitions 3 --topic test

Or, if the ZooKeeper Root configured for the Kafka service is "/", the create command becomes:

kafka-topics --zookeeper cluster2-4:2181 --create --replication-factor 1 --partitions 3 --topic test

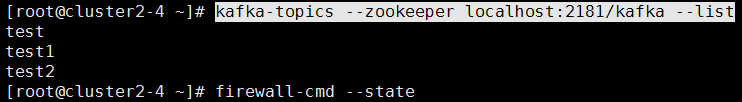
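A create request fails if the replication factor is larger than the number of available brokers. The following is a hedged pre-flight sketch: check_create_args is a hypothetical helper, and the broker count is something you supply yourself (for example, the number of Kafka Broker instances shown in Cloudera Manager):

```shell
# Hypothetical pre-flight check for kafka-topics --create.
# Arguments: partitions, replication factor, number of available brokers.
check_create_args() {
  partitions="$1"; replication="$2"; brokers="$3"
  # All three values must be plain non-negative integers.
  for n in "$partitions" "$replication" "$brokers"; do
    case "$n" in
      ''|*[!0-9]*) echo "not a number: '$n'" >&2; return 1 ;;
    esac
  done
  if [ "$partitions" -lt 1 ] || [ "$replication" -lt 1 ]; then
    echo "partitions and replication factor must be at least 1" >&2
    return 1
  fi
  # Each replica of a partition must live on a different broker.
  if [ "$replication" -gt "$brokers" ]; then
    echo "replication factor $replication exceeds broker count $brokers" >&2
    return 1
  fi
  return 0
}
```

Usage: check_create_args 3 1 3 && kafka-topics --zookeeper cluster2-4:2181/kafka --create --replication-factor 1 --partitions 3 --topic test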

B. List the existing topics:

kafka-topics --zookeeper localhost:2181/kafka --list

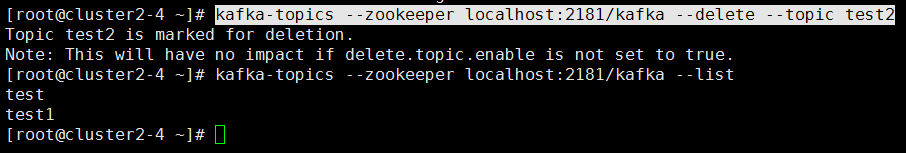

C. Delete a topic:

kafka-topics --zookeeper localhost:2181/kafka --delete --topic test2

Further notes:

If you delete a topic directly like this, the command prints: Topic *** is marked for deletion.

Method 1: Edit the Kafka configuration file server.properties, add delete.topic.enable=true, restart Kafka, and then delete the topic from the Kafka command line as above.
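Before relying on Method 1, you can check whether a given server.properties file actually sets the property. This is a minimal sketch: delete_enabled is a hypothetical helper, the file path is a placeholder, and only the key=value form is handled. Keep in mind that the default for delete.topic.enable has changed between Kafka versions, and that in CDH broker settings may be managed through Cloudera Manager rather than a hand-edited file, so a missing line does not by itself prove that deletion is disabled:

```shell
# Hypothetical helper: succeed only if the given server.properties file sets
# delete.topic.enable=true. Assumes key=value syntax; commented-out lines are
# ignored, and if the key appears several times the last occurrence wins.
delete_enabled() {
  conf="$1"   # path to the broker's server.properties (placeholder)
  value=$(grep -E '^[[:space:]]*delete\.topic\.enable[[:space:]]*=' "$conf" \
    | tail -n 1 \
    | sed 's/^[^=]*=[[:space:]]*//; s/[[:space:]]*$//')
  [ "$value" = "true" ]
}
```

Usage: delete_enabled /path/to/server.properties && echo "topic deletion is enabled"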

Method 2: Delete the topic from the command line: ./bin/kafka-topics.sh --delete --zookeeper {zookeeper server} --topic {topic name}

If delete.topic.enable=true is not set in server.properties, the topic is not really deleted at this point; it is only marked for deletion. You can list all topics with: ./bin/kafka-topics.sh --zookeeper {zookeeper server} --list

Method 3: To really delete the topic, log in to the ZooKeeper client:

zookeeper-client

Find the znode under which the topics are registered:

ls /kafka/brokers/topics
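The znode path depends on the ZooKeeper Root: with the /kafka root used here it is /kafka/brokers/topics/{topic name}, and with a root of / it would be /brokers/topics/{topic name}. Because an empty or mistyped topic name would point rmr at the wrong node, a small hedged sketch (topic_znode is a hypothetical helper) can build and sanity-check the path first. It accepts only the characters Kafka allows in topic names (ASCII letters, digits, '.', '_' and '-'):

```shell
# Hypothetical helper: print the znode that registers a topic, given the
# Kafka service's ZooKeeper Root ("/kafka" here, or "/" with no chroot).
# Empty or illegal names are rejected so the path can never end at
# the parent brokers/topics node.
topic_znode() {
  root="${1%/}"   # "/" becomes "", "/kafka/" becomes "/kafka"
  topic="$2"
  case "$topic" in
    ''|.|..|*[!A-Za-z0-9._-]*)
      echo "invalid topic name: '$topic'" >&2
      return 1 ;;
  esac
  printf '%s/brokers/topics/%s\n' "$root" "$topic"
}
```

For example, topic_znode /kafka test2 prints /kafka/brokers/topics/test2, which is the path to pass to rmr in the ZooKeeper client.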

Run the following command, and the topic is removed from ZooKeeper:

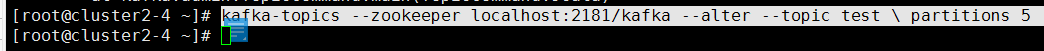

rmr /kafka/brokers/topics/{topic name}

D. Modify the number of topic partitions:

kafka-topics --zookeeper localhost:2181/kafka --alter --topic test --partitions 5

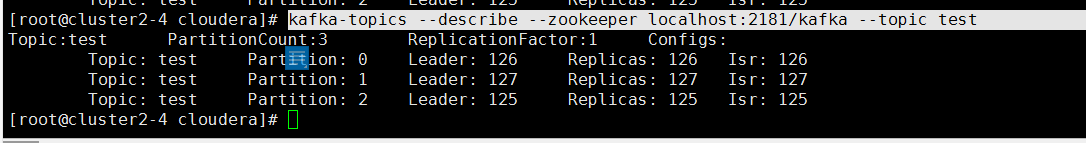
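Kafka can only increase a topic's partition count; requesting the same or a smaller number is rejected. A hedged pre-flight sketch (check_partition_increase is a hypothetical helper) compares the current count, which kafka-topics --describe reports (see E), with the requested one:

```shell
# Hypothetical pre-flight check before kafka-topics --alter --partitions N.
# Arguments: current partition count, requested partition count.
check_partition_increase() {
  current="$1"
  requested="$2"
  for n in "$current" "$requested"; do
    case "$n" in
      ''|*[!0-9]*) echo "not a non-negative integer: '$n'" >&2; return 1 ;;
    esac
  done
  # Partitions can be added but never removed.
  if [ "$requested" -le "$current" ]; then
    echo "cannot go from $current to $requested partitions: only increases are allowed" >&2
    return 1
  fi
  return 0
}
```

Usage: check_partition_increase 3 5 && kafka-topics --zookeeper localhost:2181/kafka --alter --topic test --partitions 5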

E. View topic details:

kafka-topics --describe --zookeeper localhost:2181/kafka --topic test

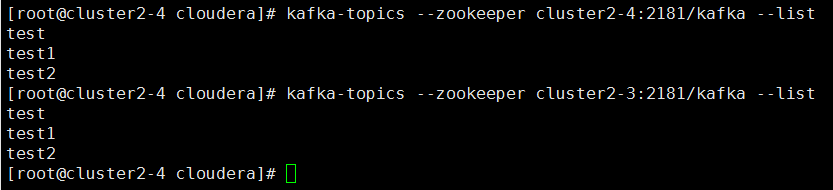
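To use the describe output in a script, for example to read the current partition count, a small filter is enough. This sketch rests on an assumption: that the summary line contains a field like PartitionCount:3 (some Kafka versions print PartitionCount: 3 with a space; the filter accepts both). Check it against the output of your own version first; partition_count is a hypothetical name:

```shell
# Hypothetical filter: read `kafka-topics --describe` output on stdin and print
# the number from the first "PartitionCount:" field it finds.
partition_count() {
  sed -n 's/.*PartitionCount: *\([0-9][0-9]*\).*/\1/p' | head -n 1
}
```

Usage: kafka-topics --describe --zookeeper localhost:2181/kafka --topic test | partition_count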

We can also check here whether the distributed setup is connected properly:

kafka-topics --zookeeper cluster2-4:2181/kafka --list

kafka-topics --zookeeper cluster2-3:2181/kafka --list

On the cluster2-4 server, querying through either cluster2-4:2181/kafka or cluster2-3:2181/kafka returns the same topic information.
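The same comparison can be scripted. A minimal sketch (same_topics is a hypothetical helper) that succeeds only when two --list outputs contain the same topic names, in any order:

```shell
# Hypothetical helper: compare two newline-separated topic listings,
# ignoring order. Succeeds (exit status 0) only if they match.
same_topics() {
  a=$(printf '%s\n' "$1" | sort)
  b=$(printf '%s\n' "$2" | sort)
  [ "$a" = "$b" ]
}
```

Usage: same_topics "$(kafka-topics --zookeeper cluster2-4:2181/kafka --list)" "$(kafka-topics --zookeeper cluster2-3:2181/kafka --list)" && echo "consistent"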

kafka-topics options:

| Option | Description |
| --- | --- |
| `--alter` | Alter the number of partitions, replica assignment, and/or configuration for the topic. |
| `--bootstrap-server <String: server to connect to>` | REQUIRED: The Kafka server to connect to. In case of providing this, a direct Zookeeper connection won't be required. |
| `--command-config <String: command config property file>` | Property file containing configs to be passed to Admin Client. This is used only with --bootstrap-server option for describing and altering broker configs. |
| `--config <String: name=value>` | A topic configuration override for the topic being created or altered. The following is a list of valid configurations: cleanup.policy, compression.type, delete.retention.ms, file.delete.delay.ms, flush.messages, flush.ms, follower.replication.throttled.replicas, index.interval.bytes, leader.replication.throttled.replicas, max.message.bytes, message.downconversion.enable, message.format.version, message.timestamp.difference.max.ms, message.timestamp.type, min.cleanable.dirty.ratio, min.compaction.lag.ms, min.insync.replicas, preallocate, retention.bytes, retention.ms, segment.bytes, segment.index.bytes, segment.jitter.ms, segment.ms, unclean.leader.election.enable. See the Kafka documentation for full details on the topic configs. It is supported only in combination with --create if --bootstrap-server option is used. |
| `--create` | Create a new topic. |
| `--delete` | Delete a topic. |
| `--delete-config <String: name>` | A topic configuration override to be removed for an existing topic (see the list of configurations under the --config option). Not supported with the --bootstrap-server option. |
| `--describe` | List details for the given topics. |
| `--disable-rack-aware` | Disable rack aware replica assignment. |
| `--exclude-internal` | Exclude internal topics when running list or describe command. The internal topics will be listed by default. |
| `--force` | Suppress console prompts. |
| `--help` | Print usage information. |
| `--if-exists` | If set when altering or deleting or describing topics, the action will only execute if the topic exists. Not supported with the --bootstrap-server option. |
| `--if-not-exists` | If set when creating topics, the action will only execute if the topic does not already exist. Not supported with the --bootstrap-server option. |
| `--list` | List all available topics. |
| `--partitions <Integer: # of partitions>` | The number of partitions for the topic being created or altered. (WARNING: If partitions are increased for a topic that has a key, the partition logic or ordering of the messages will be affected.) |
| `--replica-assignment <String: broker_id_for_part1_replica1 : broker_id_for_part1_replica2 , broker_id_for_part2_replica1 : broker_id_for_part2_replica2 , ...>` | A list of manual partition-to-broker assignments for the topic being created or altered. |
| `--replication-factor <Integer: replication factor>` | The replication factor for each partition in the topic being created. |
| `--topic <String: topic>` | The topic to create, alter, describe or delete. It also accepts a regular expression, except for --create option. Put topic name in double quotes and use the `'\'` prefix to escape regular expression symbols; e.g. `"test\.topic"`. |
| `--topics-with-overrides` | If set when describing topics, only show topics that have overridden configs. |
| `--unavailable-partitions` | If set when describing topics, only show partitions whose leader is not available. |
| `--under-replicated-partitions` | If set when describing topics, only show under replicated partitions. |
| `--zookeeper <String: hosts>` | DEPRECATED, The connection string for the zookeeper connection in the form host:port. Multiple hosts can be given to allow fail-over. |

3. Testing the Producer and Consumer

Using the topic created above, we now test how to use kafka-console-producer to produce data and kafka-console-consumer to consume it on the other end.

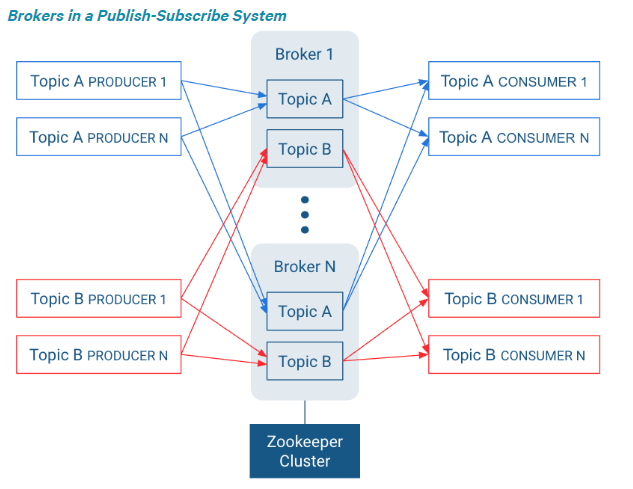

It also helps to understand the broker's role in this publish-subscribe system:

Producers write data to a topic, and consumers read data from that topic.

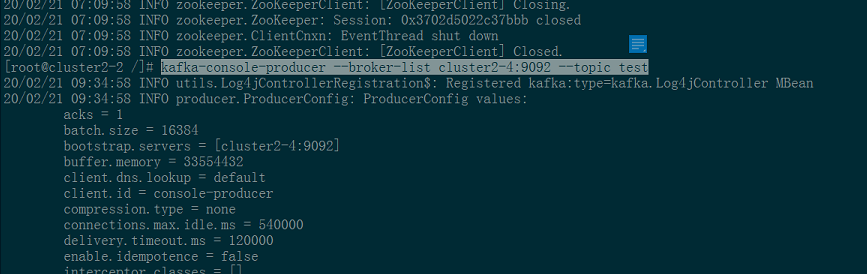

- Start the producer first:

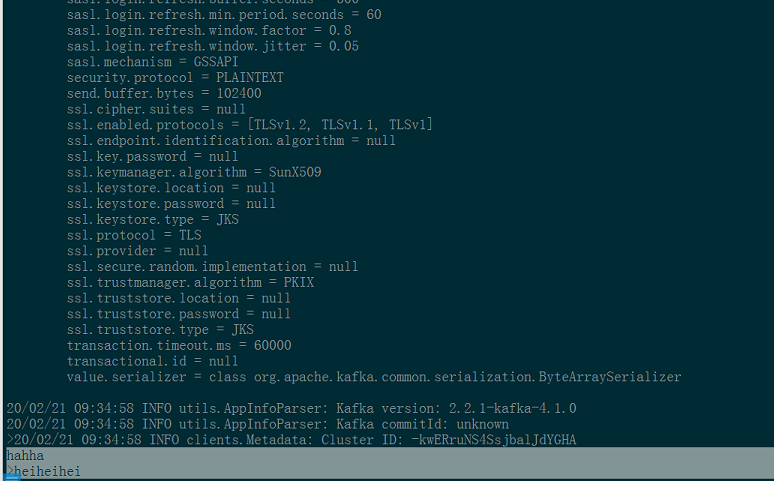

kafka-console-producer --broker-list cluster2-4:9092 --topic test

- Enter data here and it is sent to the test topic. (The topic's metadata is registered in ZooKeeper under /kafka/brokers/topics/test, but the messages themselves are stored by the brokers.)
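Typing messages by hand is fine for a first check. Because the console producer's default line reader sends each line of standard input as a separate message (see --line-reader in the options below), test data can also be piped in. A minimal sketch with a hypothetical gen_messages helper:

```shell
# Hypothetical helper: print N numbered test messages, one per line.
# Each line becomes one message when piped into kafka-console-producer.
gen_messages() {
  count="$1"
  i=1
  while [ "$i" -le "$count" ]; do
    printf 'message-%d\n' "$i"
    i=$((i + 1))
  done
}
```

Usage: gen_messages 100 | kafka-console-producer --broker-list cluster2-4:9092 --topic test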

kafka-console-producer options:

| Option | Description |
| --- | --- |
| `--batch-size <Integer: size>` | Number of messages to send in a single batch if they are not being sent synchronously. (default: 200) |
| `--broker-list <String: broker-list>` | REQUIRED: The broker list string in the form HOST1:PORT1,HOST2:PORT2. |
| `--compression-codec [String: compression-codec]` | The compression codec: either 'none', 'gzip', 'snappy', 'lz4', or 'zstd'. If specified without value, then it defaults to 'gzip'. |
| `--help` | Print usage information. |
| `--line-reader <String: reader_class>` | The class name of the class to use for reading lines from standard in. By default each line is read as a separate message. (default: kafka.tools.ConsoleProducer$LineMessageReader) |
| `--max-block-ms <Long: max block on send>` | The max time that the producer will block for during a send request. (default: 60000) |
| `--max-memory-bytes <Long: total memory in bytes>` | The total memory used by the producer to buffer records waiting to be sent to the server. (default: 33554432) |
| `--max-partition-memory-bytes <Long: memory in bytes per partition>` | The buffer size allocated for a partition. When records are received which are smaller than this size the producer will attempt to optimistically group them together until this size is reached. (default: 16384) |
| `--message-send-max-retries <Integer>` | Brokers can fail receiving the message for multiple reasons, and being unavailable transiently is just one of them. This property specifies the number of retries before the producer gives up and drops this message. (default: 3) |
| `--metadata-expiry-ms <Long: metadata expiration interval>` | The period of time in milliseconds after which we force a refresh of metadata even if we haven't seen any leadership changes. (default: 300000) |
| `--producer-property <String: producer_prop>` | A mechanism to pass user-defined properties in the form key=value to the producer. |
| `--producer.config <String: config file>` | Producer config properties file. Note that [producer-property] takes precedence over this config. |
| `--property <String: prop>` | A mechanism to pass user-defined properties in the form key=value to the message reader. This allows custom configuration for a user-defined message reader. |
| `--request-required-acks <String: request required acks>` | The required acks of the producer requests. (default: 1) |
| `--request-timeout-ms <Integer: request timeout ms>` | The ack timeout of the producer requests. Value must be non-negative and non-zero. (default: 1500) |
| `--retry-backoff-ms <Integer>` | Before each retry, the producer refreshes the metadata of relevant topics. Since leader election takes a bit of time, this property specifies the amount of time that the producer waits before refreshing the metadata. (default: 100) |
| `--socket-buffer-size <Integer: size>` | The TCP RECV buffer size. (default: 102400) |
| `--sync` | If set, message send requests to the brokers are sent synchronously, one at a time as they arrive. |
| `--timeout <Integer: timeout_ms>` | If set and the producer is running in asynchronous mode, this gives the maximum amount of time a message will queue awaiting sufficient batch size. The value is given in ms. (default: 1000) |
| `--topic <String: topic>` | REQUIRED: The topic id to produce messages to. |

- Then start the consumer:

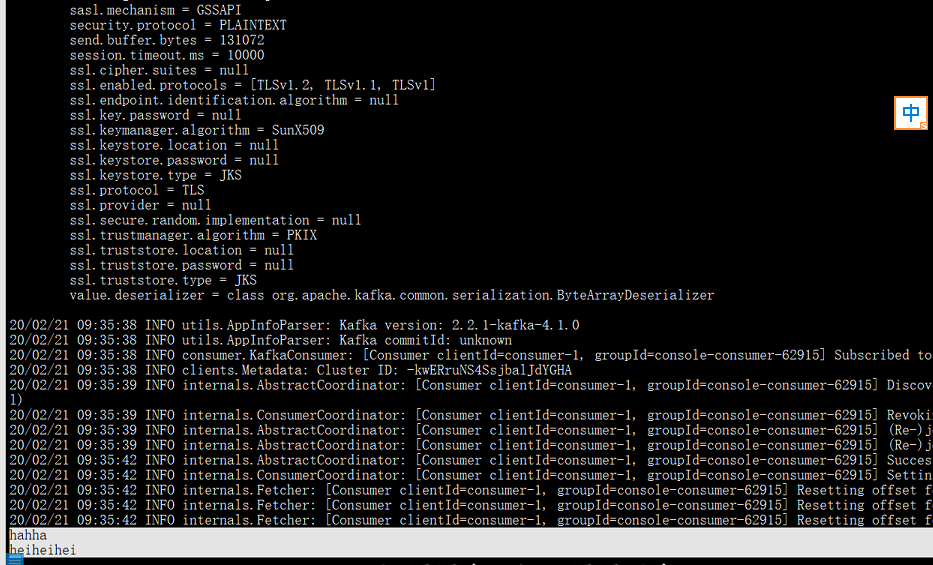

kafka-console-consumer --bootstrap-server cluster2-3:9092 --topic test --from-beginning

The trailing --from-beginning means that, if the consumer does not already have an established offset, it reads every partition of the topic starting from the earliest message still present in the log rather than from the latest one.
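Without further options the console consumer keeps running until you stop it. For a scripted check, --max-messages and --timeout-ms (both listed in the options below) make it exit on its own. A hedged sketch: consume_sample and the KAFKA_CONSUMER override variable are hypothetical, and the 10-second timeout is an arbitrary choice:

```shell
# Hypothetical wrapper for a quick end-to-end check: read at most N messages
# (default 10) from the beginning of a topic, then exit. It also exits if no
# message arrives for 10 seconds. KAFKA_CONSUMER can be overridden, e.g. with
# "echo" for a dry run; it defaults to the CDH kafka-console-consumer command.
consume_sample() {
  server="$1"; topic="$2"; max="${3:-10}"
  "${KAFKA_CONSUMER:-kafka-console-consumer}" \
    --bootstrap-server "$server" \
    --topic "$topic" \
    --from-beginning \
    --max-messages "$max" \
    --timeout-ms 10000
}
```

Usage: consume_sample cluster2-3:9092 test 5 reads up to five messages; KAFKA_CONSUMER=echo consume_sample cluster2-3:9092 test 5 only prints the arguments that would be passed.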

- The consumer receives the data from the topic:

kafka-console-consumer options:

| Option | Description |
| --- | --- |
| `--bootstrap-server <String: server to connect to>` | REQUIRED: The server(s) to connect to. |
| `--consumer-property <String: consumer_prop>` | A mechanism to pass user-defined properties in the form key=value to the consumer. |
| `--consumer.config <String: config file>` | Consumer config properties file. Note that [consumer-property] takes precedence over this config. |
| `--enable-systest-events` | Log lifecycle events of the consumer in addition to logging consumed messages. (This is specific for system tests.) |
| `--formatter <String: class>` | The name of a class to use for formatting kafka messages for display. (default: kafka.tools.DefaultMessageFormatter) |
| `--from-beginning` | If the consumer does not already have an established offset to consume from, start with the earliest message present in the log rather than the latest message. |
| `--group <String: consumer group id>` | The consumer group id of the consumer. |
| `--help` | Print usage information. |
| `--isolation-level <String>` | Set to read_committed in order to filter out transactional messages which are not committed. Set to read_uncommitted to read all messages. (default: read_uncommitted) |
| `--key-deserializer <String: deserializer for key>` | |
| `--max-messages <Integer: num_messages>` | The maximum number of messages to consume before exiting. If not set, consumption is continual. |
| `--offset <String: consume offset>` | The offset id to consume from (a non-negative number), or 'earliest' which means from beginning, or 'latest' which means from end. (default: latest) |
| `--partition <Integer: partition>` | The partition to consume from. Consumption starts from the end of the partition unless '--offset' is specified. |
| `--property <String: prop>` | The properties to initialize the message formatter. Default properties include: `print.timestamp=true\|false`, `print.key=true\|false`, `print.value=true\|false`, `key.separator=<key.separator>`, `line.separator=<line.separator>`, `key.deserializer=<key.deserializer>`, `value.deserializer=<value.deserializer>`. Users can also pass in customized properties for their formatter; more specifically, users can pass in properties keyed with 'key.deserializer.' and 'value.deserializer.' prefixes to configure their deserializers. |
| `--skip-message-on-error` | If there is an error when processing a message, skip it instead of halting. |
| `--timeout-ms <Integer: timeout_ms>` | If specified, exit if no message is available for consumption for the specified interval. |
| `--topic <String: topic>` | The topic id to consume on. |
| `--value-deserializer <String: deserializer for values>` | |
| `--whitelist <String: whitelist>` | Regular expression specifying whitelist of topics to include for consumption. |