1, Introduction to speech processing

1 Characteristics of voice signal

Through the observation and analysis of a large number of voice signals, it is found that voice signals mainly have the following two characteristics:

① In the frequency domain, the spectral components of speech signals are mainly concentrated in the range of 300 to 3400 Hz. Exploiting this, an anti-aliasing band-pass filter can be used to extract the frequency components in this range, after which the signal is sampled at 8 kHz to obtain the discrete speech signal.

② In the time domain, the speech signal is "short-time stationary": in general its characteristics change with time, but they remain stable within a short time interval. The signal resembles a periodic signal in voiced segments and random noise in unvoiced segments.

2 Voice signal acquisition

Before the speech signal is digitized, anti-aliasing pre-filtering must be carried out. Pre-filtering serves two purposes: ① to suppress all components of the input signal whose frequency exceeds fs/2 (fs is the sampling frequency), which prevents aliasing interference; ② to suppress 50 Hz power-line interference. The pre-filter must therefore be a band-pass filter. Let its upper and lower cut-off frequencies be fH and fL respectively. For most speech coders, fH = 3400 Hz, fL = 60 to 100 Hz, and the sampling rate is fs = 8 kHz. For speech recognition over telephone channels, the specifications are the same as for speech coders. When the application requirements are high or very high, fH = 4500 Hz or 8000 Hz, fL = 60 Hz, and fs = 10 kHz or 20 kHz.

To convert the original analog speech signal into a digital signal, two steps are required: sampling and quantization. Together they yield a digital speech signal that is discrete in both time and amplitude. Sampling discretizes the signal in time: instantaneous values of the analog signal x(t) are taken point by point at a fixed time interval Δt. Sampling must respect the Nyquist theorem: the sampling frequency fs must be more than twice the highest frequency of the measured signal for the waveform to be reconstructed correctly. Sampling is realized by multiplying the analog signal by a sampling pulse train.
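The Nyquist condition can be checked numerically. The following is a minimal sketch using illustrative values that are not taken from the text (a 5 kHz tone sampled at 8 kHz): a tone above fs/2 produces exactly the same samples as a lower-frequency tone, which is why it must be filtered out before sampling.

```matlab
% Aliasing sketch: a 5 kHz tone sampled at 8 kHz is indistinguishable
% from a 3 kHz tone. All numeric values here are illustrative assumptions.
fs = 8000;                 % sampling frequency (Hz)
n  = 0:63;                 % sample indices
f_high  = 5000;            % tone above fs/2 = 4000 Hz
f_alias = fs - f_high;     % frequency it folds down to (3000 Hz)

x_high  = cos(2*pi*f_high *n/fs);   % samples of the 5 kHz tone
x_alias = cos(2*pi*f_alias*n/fs);   % samples of the 3 kHz tone

% The two sample sequences agree up to floating-point rounding.
max_diff = max(abs(x_high - x_alias));
fprintf('Largest sample difference: %g\n', max_diff);
```

Once the signal has been sampled, the two tones cannot be told apart, so the suppression has to happen in the analog pre-filter.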

During sampling, attention must be paid to the choice of sampling interval and to aliasing. For analog signal sampling, the sampling interval Δt must be determined first, and choosing it reasonably involves several technical factors. Generally, the higher the sampling frequency, the denser the sampling points and the closer the discrete signal is to the original. However, an excessively high sampling frequency is undesirable. For a signal of fixed length T, too many samples (N = T/Δt) are collected, which adds unnecessary computation and storage; if the number of samples N is limited, the covered duration becomes too short and some information is lost. If the sampling frequency is too low and the sampling points are too far apart, the discrete signal cannot reflect the waveform of the original signal and the signal cannot be restored, which results in aliasing. According to the sampling theorem, when the sampling frequency is greater than twice the bandwidth of the signal, sampling loses no information, and the original waveform can be reconstructed without distortion from the samples using an ideal low-pass filter.

Quantization discretizes the amplitude: the signal amplitude is represented by binary quantization levels. The quantization levels change in discrete steps, whereas the actual amplitude is a continuous physical quantity, so each value is rounded to the nearest quantization level.
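Rounding to the nearest quantization level can be sketched as follows. This is only an illustration under assumed conditions (input scaled to the range [-1, 1), an 8-bit uniform quantizer, a 200 Hz test tone); none of these values come from the text.

```matlab
% Uniform quantization sketch. Assumptions: input lies in [-1, 1),
% 8 bits per sample, synthetic 200 Hz test tone sampled at 8 kHz.
nBits = 8;                            % bits per sample
step  = 2 / 2^nBits;                  % quantization step for a [-1, 1) range
t = (0:799)/8000;                     % 0.1 s of samples at 8 kHz
x = 0.9*sin(2*pi*200*t);              % test signal, stays inside the range

q  = round(x/step);                   % index of the nearest quantization level
q  = min(max(q, -2^(nBits-1)), 2^(nBits-1)-1);  % clip to the available codes
xq = q*step;                          % quantized amplitudes

err     = x - xq;                     % quantization error
max_err = max(abs(err));              % at most step/2 when nothing is clipped
snr_db  = 10*log10(sum(x.^2)/sum(err.^2));
fprintf('step = %g, max error = %g, SNR = %.1f dB\n', step, max_err, snr_db);
```

Increasing nBits halves the step for every added bit, which is the reason recording devices commonly use 16 bits per sample.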

After pre-filtering and sampling, the speech signal is converted into binary digital code by the A/D converter. The anti-aliasing filter is usually integrated on the same chip as the analog-to-digital converter, so the quality of the digitized speech signal is generally well guaranteed.

After the voice signal is collected, it needs to be analyzed, for example by time domain analysis, spectrum analysis, spectrogram analysis and noise filtering.

3 Speech signal analysis technology

Speech signal analysis is the premise and foundation of speech signal processing. Only after the parameters that represent the essential characteristics of the speech signal have been extracted can they be used for efficient speech communication, speech synthesis and speech recognition [8]. Moreover, the sound quality of synthesized speech and the speech recognition rate both depend on the accuracy and precision of speech signal analysis. Speech signal analysis therefore plays an important role in the application of speech signal processing.

"Short-time analysis" runs through the whole process of speech analysis. Taken as a whole, the characteristics of the speech signal and the parameters that describe them change with time, so speech is a non-stationary process and cannot be analyzed directly with digital signal processing techniques designed for stationary signals. However, different speech sounds are produced by the muscles of the mouth shaping the vocal tract, and this muscle movement is very slow relative to the speech frequencies. Consequently, although the speech signal is time-varying, its characteristics remain basically unchanged over a short time range (generally taken to be 10 to 30 ms). Within such a range it can be regarded as a quasi-stationary process, that is, the speech signal has short-time stationarity. The analysis and processing of any speech signal must therefore be carried out on a "short-time" basis: the signal is divided into segments whose characteristic parameters are analyzed separately. Each segment is called a "frame", and the frame length is generally 10 to 30 ms. For the speech signal as a whole, what is analyzed is the time series formed by the characteristic parameters of each frame.
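Dividing a signal into frames can be sketched as follows. The 25 ms frame length lies within the 10 to 30 ms range stated above; the 10 ms frame shift and the random placeholder signal are assumptions made only for this illustration.

```matlab
% Framing sketch: split a signal into overlapping short-time frames.
% Assumptions: 25 ms frames, 10 ms frame shift, random placeholder signal.
fs = 8000;                                % sampling rate (Hz)
x  = randn(fs, 1);                        % 1 s placeholder standing in for speech
frameLen  = round(0.025*fs);              % 25 ms -> 200 samples
hop       = round(0.010*fs);              % 10 ms -> 80 samples
numFrames = floor((length(x) - frameLen)/hop) + 1;

frames = zeros(frameLen, numFrames);      % one frame per column
for k = 1:numFrames
    first = (k-1)*hop + 1;                % index of the first sample of frame k
    frames(:, k) = x(first : first+frameLen-1);
end
fprintf('%d frames of %d samples each\n', numFrames, frameLen);
```

Storing one frame per column means any per-frame parameter can then be computed column by column, which gives the characteristic parameter time series described above.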

According to the nature of the parameters being analyzed, speech signal analysis can be divided into time domain analysis, frequency domain analysis, cepstral domain analysis and so on. Time domain analysis is simple, requires little computation and has a clear physical meaning. However, because the most important perceptual characteristics of the speech signal are reflected in the power spectrum, while phase changes play only a small role, frequency domain analysis is more important than time domain analysis.

4 Time domain analysis of speech signal

Time domain analysis of the speech signal means analyzing and extracting its time domain parameters. When speech is analyzed, the first and most intuitive thing to look at is its time domain waveform. The speech signal is itself a time domain signal, so time domain analysis is the earliest and most widely used analysis method, and it works directly on the waveform. Time domain analysis is usually used for the most basic parameter analysis and applications, such as speech segmentation, preprocessing and coarse classification. The characteristics of this method are: ① the representation of the speech signal is intuitive and has a clear physical meaning; ② the implementation is relatively simple and requires little computation; ③ some important parameters of speech can be obtained; ④ only general equipment such as an oscilloscope is needed, which is simple to use.

The time domain parameters of the speech signal include short-time energy, short-time zero-crossing rate, short-time autocorrelation function and short-time average magnitude difference function. These are the most basic short-time parameters of the speech signal and are used in all kinds of digital speech processing techniques [6]. A rectangular window or a Hamming window is generally used when calculating them.
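Two of these parameters, short-time energy and short-time zero-crossing rate, can be sketched as follows. The test signal is synthetic and assumed for illustration only: a 150 Hz tone stands in for a voiced segment and low-level noise for an unvoiced one. The Hamming window is written out explicitly so that no toolbox function is needed.

```matlab
% Short-time energy and zero-crossing rate sketch.
% Assumptions: synthetic signal, 25 ms frames, 10 ms frame shift, fs = 8 kHz.
fs = 8000;
t  = (0:fs-1)'/fs;
x  = [0.5*sin(2*pi*150*t(1:fs/2)); ...    % "voiced-like" half: periodic tone
      0.05*randn(fs/2, 1)];               % "unvoiced-like" half: weak noise

frameLen = 200;                           % 25 ms
hop      = 80;                            % 10 ms
w = 0.54 - 0.46*cos(2*pi*(0:frameLen-1)'/(frameLen-1));  % Hamming window

numFrames = floor((length(x) - frameLen)/hop) + 1;
energy = zeros(numFrames, 1);
zcr    = zeros(numFrames, 1);
for k = 1:numFrames
    first = (k-1)*hop + 1;
    seg   = x(first : first+frameLen-1);
    energy(k) = sum((seg .* w).^2);       % energy of the windowed frame
    % each sign change contributes 2 to the sum, so divide by 2;
    % dividing by frameLen gives approximate crossings per sample
    zcr(k) = sum(abs(diff(sign(seg)))) / (2*frameLen);
end
```

In this sketch the tone frames show high energy and a low zero-crossing rate, while the noise frames show the opposite, which is the contrast commonly used to separate voiced from unvoiced segments.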

5 Frequency domain analysis of speech signal

Frequency domain analysis of the speech signal means analyzing its frequency domain characteristics. In a broad sense it includes the spectrum, power spectrum, cepstrum and spectral envelope of the speech signal. Commonly used frequency domain analysis methods include the band-pass filter bank method, the Fourier transform method and the linear prediction method.
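The Fourier transform method, applied to a single frame, can be sketched as follows. The frame here is synthetic (two sinusoids at 500 Hz and 1500 Hz) and the frame length, FFT size and sampling rate are assumptions chosen for illustration.

```matlab
% Short-time power spectrum sketch (Fourier transform method).
% Assumptions: synthetic two-tone frame, fs = 8 kHz, 256-sample frame.
fs = 8000;
frameLen = 256;                                   % 32 ms frame
n = (0:frameLen-1)';
frame = sin(2*pi*500*n/fs) + 0.5*sin(2*pi*1500*n/fs);

w    = 0.54 - 0.46*cos(2*pi*n/(frameLen-1));      % Hamming window
nfft = 512;                                       % zero-padded FFT length
X = fft(frame .* w, nfft);                        % spectrum of the windowed frame
P = abs(X(1:nfft/2+1)).^2;                        % one-sided power spectrum
f = (0:nfft/2)' * fs/nfft;                        % frequency axis (Hz)

[~, iPeak] = max(P);                              % strongest spectral component
peakFreq = f(iPeak);
fprintf('Strongest component near %.1f Hz\n', peakFreq);
```

Applying the same computation to every frame and stacking the results over time yields the data behind a spectrogram.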

2, Partial source code

function varargout = zonghe(varargin)

% ZONGHE MATLAB code for zonghe.fig

% ZONGHE, by itself, creates a new ZONGHE or raises the existing

% singleton*.

%

% H = ZONGHE returns the handle to a new ZONGHE or the handle to

% the existing singleton*.

%

% ZONGHE('CALLBACK',hObject,eventData,handles,...) calls the local

% function named CALLBACK in ZONGHE.M with the given input arguments.

%

% ZONGHE('Property','Value',...) creates a new ZONGHE or raises the

% existing singleton*. Starting from the left, property value pairs are

% applied to the GUI before zonghe_OpeningFcn gets called. An

% unrecognized property name or invalid value makes property application

% stop. All inputs are passed to zonghe_OpeningFcn via varargin.

%

% *See GUI Options on GUIDE's Tools menu. Choose "GUI allows only one

% instance to run (singleton)".

%

% See also: GUIDE, GUIDATA, GUIHANDLES

% Edit the above text to modify the response to help zonghe

% Last Modified by GUIDE v2.5 23-Jan-2022 22:45:18

% Begin initialization code - DO NOT EDIT

gui_Singleton = 1;

gui_State = struct('gui_Name', mfilename, ...

'gui_Singleton', gui_Singleton, ...

'gui_OpeningFcn', @zonghe_OpeningFcn, ...

'gui_OutputFcn', @zonghe_OutputFcn, ...

'gui_LayoutFcn', [] , ...

'gui_Callback', []);

if nargin && ischar(varargin{1})

gui_State.gui_Callback = str2func(varargin{1});

end

if nargout

[varargout{1:nargout}] = gui_mainfcn(gui_State, varargin{:});

else

gui_mainfcn(gui_State, varargin{:});

end

% End initialization code - DO NOT EDIT

% --- Executes just before zonghe is made visible.

function zonghe_OpeningFcn(hObject, eventdata, handles, varargin)

% This function has no output args, see OutputFcn.

% hObject handle to figure

% eventdata reserved - to be defined in a future version of MATLAB

% handles structure with handles and user data (see GUIDATA)

% varargin command line arguments to zonghe (see VARARGIN)

% Choose default command line output for zonghe

handles.output = hObject;

% Update handles structure

guidata(hObject, handles);

% UIWAIT makes zonghe wait for user response (see UIRESUME)

% uiwait(handles.figure1);

% --- Outputs from this function are returned to the command line.

function varargout = zonghe_OutputFcn(hObject, eventdata, handles)

% varargout cell array for returning output args (see VARARGOUT);

% hObject handle to figure

% eventdata reserved - to be defined in a future version of MATLAB

% handles structure with handles and user data (see GUIDATA)

% Get default command line output from handles structure

varargout{1} = handles.output;

% --- Executes on button press in pushbutton1.

function pushbutton1_Callback(hObject, eventdata, handles)

global aud RecordLength ShowLength FrequencyWindow1 FrequencyWindow2 fs nBits

global mag idx_last recObj myRecording bit%global variable

aud=audiodevinfo; %Sound equipment

%initialization

RecordLength=5; %The recording time is 5 seconds

ShowLength=5; %The length of time displayed is also 5 seconds

FrequencyWindow1=0;%Lower limit of the frequency window (Hz)

FrequencyWindow2=3000;%Upper limit of the frequency window (Hz)

fs=44100;%sampling frequency

nBits=16;%Bits per sample of the recording device

mag=1.05;%The display range is 1.05 times the maximum value

idx_last=1;%Sample index at the previous plot update

recObj=audiorecorder(fs,nBits,1);%Create the recorder object (1 channel)

record(recObj,RecordLength);%sound recording

tic

while toc<.1

end

bit=2;

%Main cycle

while toc<RecordLength

myRecording=getaudiodata(recObj);%Get recording data

idx=round(toc*fs);%Execution time calculation

while idx-idx_last<.1*fs

idx=round(toc*fs);

end

axes(handles.axes1);

plot((max(1,size(myRecording,1)-fs*ShowLength):(2^bit):size(myRecording,1))./fs,myRecording(max(1,size(myRecording,1)-fs*ShowLength):(2^bit):end)) %Draw a picture

mag=max(abs(myRecording));%Maximum value of voice data

%Set display range

ylim([-1.2 1.2]*mag)

xlim([max(0,size(myRecording,1)/fs-ShowLength) max(size(myRecording,1)/fs,ShowLength)])

title('Waveform display of sound signal');

ylabel('Signal level(v)');

xlabel('Time (s)');

drawnow

idx_last=idx;

end

% hObject handle to pushbutton1 (see GCBO)

% eventdata reserved - to be defined in a future version of MATLAB

% handles structure with handles and user data (see GUIDATA)

% --- Executes on button press in pushbutton2.

function pushbutton2_Callback(hObject, eventdata, handles)

global aud RecordLength ShowLength FrequencyWindow1 FrequencyWindow2 fs nBits

global mag idx_last recObj myRecording

save yinpin.mat aud RecordLength ShowLength FrequencyWindow1 FrequencyWindow2 fs nBits mag idx_last recObj myRecording

load yinpin.mat %Load data

sound(myRecording,fs);%replay

% hObject handle to pushbutton2 (see GCBO)

% eventdata reserved - to be defined in a future version of MATLAB

% handles structure with handles and user data (see GUIDATA)

% --- Executes on button press in pushbutton3.

function pushbutton3_Callback(hObject, eventdata, handles)

global y2 t fs myRecording ShowLength bit

y2=myRecording(max(1,size(myRecording,1)-fs*ShowLength):(2^bit):end);

n=length(y2);

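dt=(2^bit)/fs;%Time step of the decimated signal (every 2^bit-th sample is kept)

t=(0:n-1)*dt;%Time axis with exactly n points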

axes(handles.axes1);

% subplot(3,2,2)

plot(t,y2);

title('Time domain waveform');

ylabel('Amplitude (V)');

xlabel('Time (s)');

% hObject handle to pushbutton3 (see GCBO)

% eventdata reserved - to be defined in a future version of MATLAB

% handles structure with handles and user data (see GUIDATA)

clear;

figure(5);

Ft=44100;%sampling frequency

Fp=2500;%Passband edge frequency (Hz)

Fs=5000;%Stopband edge frequency (Hz)

wp=2*pi*Fp/Ft;%Frequency normalization

ws=2*pi*Fs/Ft;

wp1=2*tan(wp/2);%Pre-warp to analog specifications (bilinear transform with T=1)

ws1=2*tan(ws/2);

[N,wn]=buttord(wp1,ws1,1,100,'s');%Calculate the filter order and 3 dB cut-off frequency

[b,a]=butter(N,wn,'s');%Numerator and denominator coefficients of H(s)

[bz,az]=bilinear(b,a,1);%Sampling rate 1 matches the T=1 pre-warping above

freqz(bz,az,512,Ft);

%plot(f,20*log10(abs(h)));

%plot(f,abs(h));

%xlabel('frequency(Hz)');

%ylabel('range(dB)')

title('low pass filter ');

grid on;

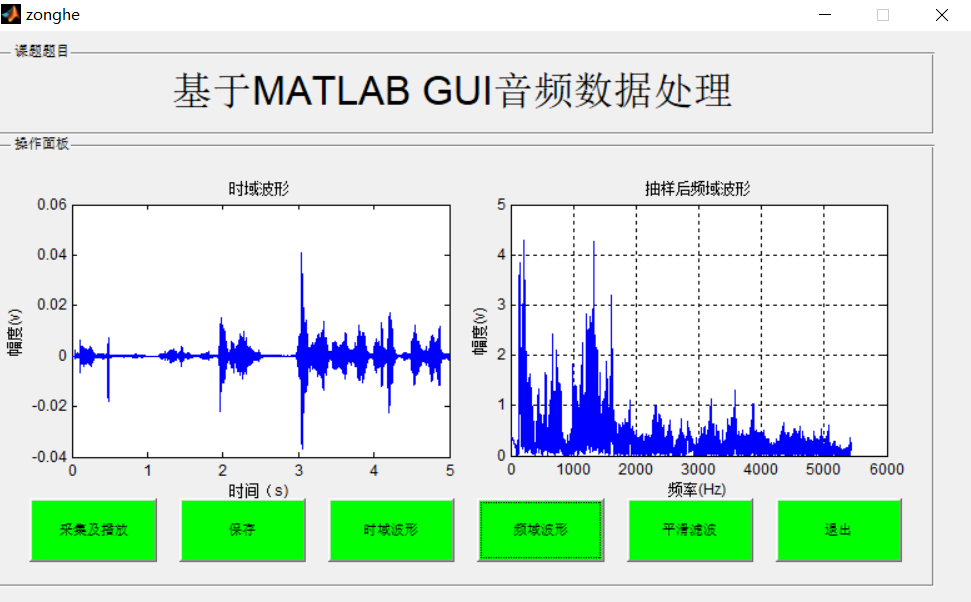

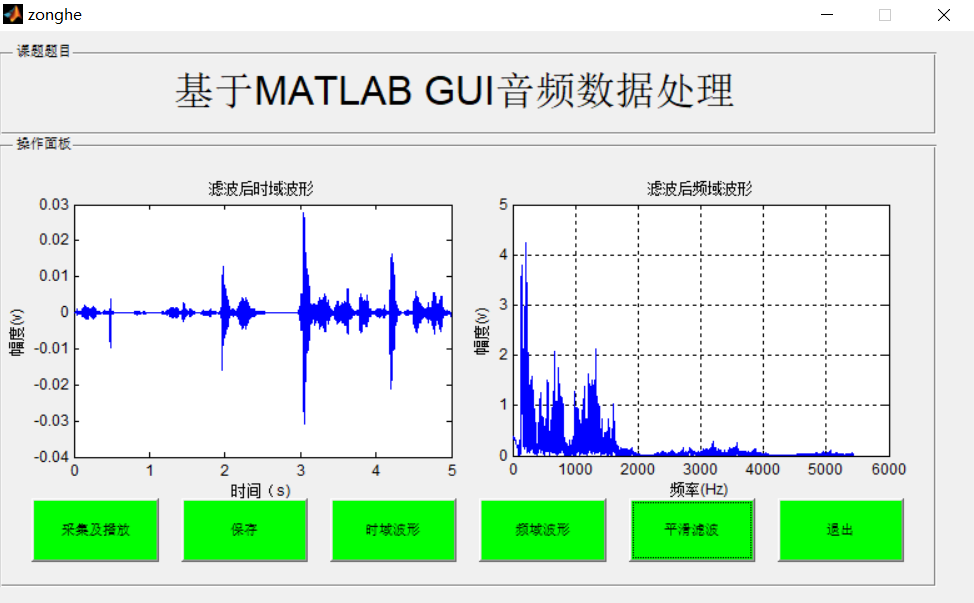

3, Operation results

4, MATLAB version and references

1 MATLAB version

2014a

2 References

[1] Han Jiqing, Zhang Lei, Zheng Tieran. Speech Signal Processing (3rd Edition) [M]. Tsinghua University Press, 2019.

[2] Liu Ruobian. Deep Learning: Practice of Speech Recognition Technology [M]. Tsinghua University Press, 2019.

[3] Song Yunfei, Jiang Zhancai, Wei Zhonghua. Speech processing interface design based on MATLAB GUI [J]. Scientific and Technological Information, 2013, (02).