1. Overview

Bob Dylan is a great American poet and songwriter whose work has contributed much to American culture and to world culture. This paper uses NLTK to extract the nouns from Bob Dylan's lyrics, counts their frequencies with a hash table, and visualizes the most frequent ones with matplotlib.

2. Problem Analysis

- First, we download Bob Dylan's lyrics from GitHub, read the lyric files into Python, and extract the nouns using functions in NLTK.

- With the list of all nouns in hand, we use a hash table to compute word frequencies.

- A hash table is an efficient structure for storing word frequencies. It maps each key (here, a word) to a storage location computed from the key's hash value, so an entry can be reached directly without scanning the other entries. In Python, a dictionary is a hash table, and looking up or updating a word's count takes O(1) time on average.

- After counting, we take the 20 most frequent nouns from the dictionary and plot them as a bar chart with the matplotlib library.
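The counting and top-N steps above can be sketched with a plain Python dict serving as the hash table. This is a minimal sketch under stated assumptions. The function names `count_words` and `top_n` are hypothetical. `heapq.nlargest` is swapped in for the full sort used in the experimental code, because it avoids sorting every distinct word when only the top few are needed.

```python
import heapq


def count_words(words):
    """Count occurrences with a dict (Python's built-in hash table).

    Each lookup and update takes O(1) time on average, so counting
    n words takes O(n) time overall.
    """
    freq = {}
    for w in words:
        freq[w] = freq.get(w, 0) + 1
    return freq


def top_n(freq, n):
    """Return the n most frequent (word, count) pairs, highest first.

    heapq.nlargest runs in O(m log n) for m distinct words, instead of
    the O(m log m) needed to sort every entry.
    """
    return heapq.nlargest(n, freq.items(), key=lambda item: item[1])


# Small hand-made word list, for illustration only.
nouns = ["love", "heart", "love", "time", "world", "love", "heart"]
freq = count_words(nouns)
print(top_n(freq, 2))  # prints [('love', 3), ('heart', 2)]
```

For a few thousand distinct words, either approach is fast. `collections.Counter` and its `most_common(n)` method would do the same job in the standard library.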

3. Experimental Code

```python
import os

import matplotlib.pyplot as plt
import nltk

# Requires NLTK's sentence tokenizer (punkt) and POS-tagger data to be installed.
path = "/Users/python/Desktop/BTH004 algorithm analysis/Chinese side/code/pycode/bobdylan/txt"  # folder containing the lyric files

NOUN_TAGS = {"NN", "NNP", "NNS", "NNPS"}  # Penn Treebank noun tags

nouns = []
for file in os.listdir(path):  # traverse the folder
    if file.startswith("."):  # skip hidden files such as .DS_Store
        continue
    position = os.path.join(path, file)
    with open(position, "r", encoding="utf-8") as f:
        text = f.read()
    # Split into sentences, tokenize and tag each one, and keep only the nouns.
    for sentence in nltk.sent_tokenize(text):
        for word, pos in nltk.pos_tag(nltk.word_tokenize(sentence)):
            if pos in NOUN_TAGS:
                nouns.append(word)

# Word-frequency statistics with a dict (Python's built-in hash table).
nounsdict = {}
for key in nouns:
    if key in nounsdict:
        nounsdict[key] += 1
    else:
        nounsdict[key] = 1

# Drop punctuation tokens that the tagger labeled as nouns.
# pop(..., None) avoids a KeyError when a token is absent
# (the original code deleted '"' twice, which raises KeyError).
for token in ["*", '"', "“", "”", ">", "<", "–"]:
    nounsdict.pop(token, None)

# Sort by frequency (descending) and keep the 20 most frequent nouns.
top = sorted(nounsdict.items(), key=lambda item: item[1], reverse=True)[:20]
print(top)

keys = [word for word, _ in top]
y = [count for _, count in top]
x = range(len(keys))

plt.figure()
plt.bar(x, y)
plt.xticks(x, keys, rotation=45)
plt.xlabel("words")
plt.ylabel("frequency")
plt.title("Noun frequency in Bob Dylan's lyrics")
plt.show()
```

4. Experimental Results

In our first version of the code, we simply sorted all words by frequency and obtained the results below. Many of the top words were articles, prepositions, and other function words, so the data needed cleaning.

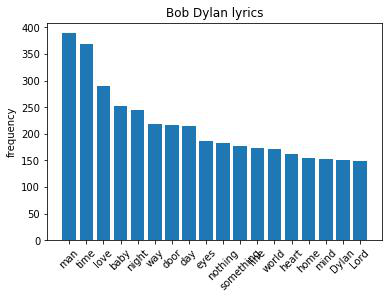

We therefore rewrote the code to use Python's NLTK natural language processing package and extract only the nouns from the lyrics. We counted their frequencies with a hash table and visualized them with matplotlib, obtaining the following results.

Bob Dylan's music is a vehicle for sincerely expressing his outlook on life and his attitudes. Words such as love, world, heart, and time appear frequently in his lyrics, which sing of love, loneliness, and freedom. His anger is gentle yet strong, and his lyrics are beautiful and calm. His Nobel Prize in Literature reflects the view that lyrics with great literary expressiveness and influence on their times can rank among the greatest poems and literary works.

5. Experimental Summary

During the experiment, we encountered the following problems:

- Reading the lyric files in Python.

  Solution: use Python's os library to list the files in the folder and open each one in turn.

- Most of the words obtained were auxiliaries, prepositions, and articles, which have little reference value.

  Solution: change the word segmentation strategy so that the parts of speech are tagged and only the nouns in the lyrics are extracted.

- The nltk.download() function could not be used.

  Solution: the cause may be a proxy server issue. Manually downloading the data files and placing them in a directory on NLTK's data search path resolves it.
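The noun-only strategy amounts to filtering (word, tag) pairs on the Penn Treebank noun tags NN, NNS, NNP, and NNPS. The experimental code applies this filter to the output of nltk.pos_tag. The sketch below is a minimal illustration that assumes tagging has already been done. The tagged list is hand-written and its tags are assumed, not actual tagger output. The function name `extract_nouns` is hypothetical. The sketch also drops tokens that consist only of punctuation. The tagger can label stray symbols as nouns, which is why the experimental code removes tokens such as `*` and `"` from its dictionary.

```python
NOUN_TAGS = {"NN", "NNS", "NNP", "NNPS"}  # Penn Treebank noun tags


def extract_nouns(tagged):
    """Keep words tagged as nouns that contain at least one letter or
    digit, so tokens made only of punctuation are dropped."""
    return [
        word
        for word, tag in tagged
        if tag in NOUN_TAGS and any(ch.isalnum() for ch in word)
    ]


# Hand-tagged example in the (word, tag) format that nltk.pos_tag returns;
# the tags here are assumed for illustration.
tagged = [("How", "WRB"), ("many", "JJ"), ("roads", "NNS"), ("must", "MD"),
          ("a", "DT"), ("man", "NN"), ("walk", "VB"), ("down", "RP"),
          ("*", "NN")]
print(extract_nouns(tagged))  # prints ['roads', 'man']
```

Filtering on content instead of a fixed deny-list means new punctuation tokens are handled without editing the code.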

Reflections:

This experiment used several Python libraries (os, NLTK, and matplotlib), which made me more familiar with Python. It was also interesting to see how different libraries serve different purposes.

Hash tables provide fast insertion and lookup: on average, each operation takes constant time regardless of how much data the table holds. They are fast and easy to implement.
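To make this claim concrete, here is a minimal hash table with separate chaining, written from scratch for illustration. The class name `ChainedHashTable` is hypothetical. In practice the experimental code relies on Python's dict, which uses open addressing rather than chaining. The sketch doubles its bucket array whenever the load factor (entries per bucket) exceeds a threshold. Doubling keeps chains short, so insertion and lookup stay O(1) on average.

```python
class ChainedHashTable:
    """A minimal hash table using separate chaining (illustrative only)."""

    def __init__(self, capacity=8, max_load=0.75):
        self._buckets = [[] for _ in range(capacity)]
        self._size = 0
        self._max_load = max_load

    def _bucket(self, key):
        # hash() maps the key to an integer; the modulo picks a bucket.
        return self._buckets[hash(key) % len(self._buckets)]

    def _resize(self):
        # Double the bucket array and re-insert every entry.
        old = self._buckets
        self._buckets = [[] for _ in range(2 * len(old))]
        for bucket in old:
            for key, value in bucket:
                self._bucket(key).append([key, value])

    def put(self, key, value):
        bucket = self._bucket(key)
        for entry in bucket:
            if entry[0] == key:  # key already present: overwrite its value
                entry[1] = value
                return
        bucket.append([key, value])
        self._size += 1
        if self._size / len(self._buckets) > self._max_load:
            self._resize()

    def get(self, key, default=None):
        for k, v in self._bucket(key):
            if k == key:
                return v
        return default

    def __len__(self):
        return self._size


# Word counting with the table, mirroring the dict-based version.
table = ChainedHashTable()
for word in ["love", "heart", "love", "time", "love"]:
    table.put(word, table.get(word, 0) + 1)
print(table.get("love"), table.get("heart"), len(table))  # prints 3 1 3
```

A single resize costs O(n), but because the capacity doubles each time, that cost averages out to O(1) per insertion.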

References and Acknowledgments

How to Count the Lyric Frequency of David Bowie. https://blog.csdn.net/weixin_43230147/article/details/109323025

Extracting noun phrases from NLTK using Python. http://www.voidcn.com/article/p-fxffnovl-bws.html

bob_dylan_lyrics: Bob Dylan lyrics. https://github.com/mulhod/bob_dylan_lyrics