heap

Heap is a special data structure in computer science. It is usually a complete binary tree (each node has no more than two child nodes, only the lowest layer starts from the right, does not lack or continuously lacks a section of nodes, and other layers are full tree structure).

Nature of heap:

- The value of a node is always not greater than or less than the value of its parent node

- It is usually a complete binary tree

The heap with the largest root node is called large root heap, and the heap with the smallest root node is called small root heap. Common piles include binary pile, Fibonacci pile, etc.

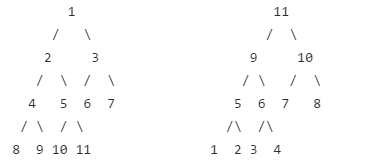

Small root pile on the left and large root pile on the right:

Heap is defined as follows:

\A sequence of (n \) elements \ (\ {A_1,A_2,A_i,\cdots,A_n \} \) is called a heap when it is precise and meets the following conditions:

or

In fact, this is to put the left son of a node \ (i \) in the position of \ (2i \) and the right son in the position of \ (2i+1 \), and then meet the nature of the heap. We also store in this way when we store the heap.

Heap operation

a. Insert: inserts a new element into the heap. Inserts a new node at the end of the array. Then, the child node and parent node are adjusted up from bottom to top. If they do not meet the nature of the heap, they are exchanged so that the current subtree meets the nature of the binary heap. The time complexity is \ (\ mathcal{O}(\log n) \).

void push(int a[], int i, int& n) { //i is the inserted value and n is the size of the heap before insertion

n++; //Resize

a[n] = i;

int p = n;

while (p > 1 && a[p / 2] > a[p]) {

swap(a[p / 2], a[p]);

p /= 2;

}

return;

}

b. Pop up: delete the heap top element. First place the last node stored in the heap at the top of the heap, and then adjust the parent node and child node from bottom to top. The time complexity is \ (\ mathcal{O}(\log n) \).

int pop(int a[], int& n) {

int res = a[1]; //Record top element

a[1] = a[n]; //Swap top and tail elements

n--; //Resize

int p = 1, t;

while (p * 2 <= n) {

if (p * 2 + 1 > n || a[p * 2] <= a[p * 2 + 1]) { //Find the youngest child

t = p * 2;

} else {

t = p * 2 + 1;

}

if (a[p] > a[t]) { //The nature of the heap is not satisfied

swap(a[p], a[t]);

} else {

break;

}

}

return res;

}

c) Delete: exchange this element with the heap tail element, adjust the heap capacity, and then adjust it from top to bottom from the current position of the original heap tail element. The time complexity is \ (\ mathcal{O}(\log n) \).

Big root pile

The following sequences meet the definition of large root heap:

-

7,5,6,2,4,1,5

Each node is not less than the value of the child node

-

7,7,5,7,7,5,5

Each node is not less than the value of the child node

-

7,7,6,8,2,5,3

The relationship between 7 and 8 does not conform to the nature of the heap

-

1,2,3,4,5,6,7

Small root pile

Priority queue

In the queue, elements enter from the end of the queue and are deleted at the head of the queue. Compared with the queue, the elements in the priority queue increase the priority attribute, and the elements with high priority are deleted first. The internal of priority queue is implemented by heap.

There is basically no difference between the use of priority queues and queues.

#include<queue>

#include<iostream>

using namespace std;

int main() {

priority_queue<int> q; //Define a priority queue for storing int types

q.push(1); //Join the team

q.push(2);

q.push(3);

while (!q.empty()) {

cout << q.top() << endl;

q.pop();

}

return 0;

}

Output is:

3 2 1

The priority queue will put the larger value at the head of the queue by default, which is the large root heap. If you want to put the smaller value in the front, you need to define it in the following way:

priority_queue<int, vector<int>, greater<int> > pq;

The meaning is: int is installed in the priority queue. The priority queue is stored in vector. The comparison method is greater. When using the priority queue, the greater method will put the smaller elements at the head of the queue

Alternatively, we can use the priority overload less than sign.

struct node {

int dist, loc;

node() { }

bool operator < (const node & a) const {

return dist > a.dist;

}

};

priority_queue <node> Q;

The above code plays the role of priority overloading. We can still perform convenient operations such as top and pop.

Example:

Enter \ (n \) numbers and sort from large to small. Priority queue must be used.

#include<queue>

#include<iostream>

using namespace std;

priority_queue<int> pq;

int main() {

int n;

cin >> n;

for (int i = 1;i <= n;i++) {

int t;

cin >> t;

pq.push(t);

}

while (!pq.empty()) {

cout << pq.top() << endl;

pq.pop();

}

}

Merge fruit

In an orchard, Duoduo has knocked down all the fruits and divided them into different piles according to different kinds of fruits. Dodo decided to put all the fruit into a pile.

Each time, Duoduo can merge two piles of fruits together, and the consumed physical strength is equal to the sum of the weight of the two piles of fruits. It can be seen that after \ (n-1 \) times of merging, there is only one pile left. The total physical strength consumed by Duoduo in merging fruits is equal to the sum of physical strength consumed in each merging.

Because we have to make great efforts to carry these fruits home, Duoduo should save energy as much as possible when merging fruits. Assuming that the weight of each fruit is \ (1 \), and the number of fruit types and the number of each fruit are known, your task is to design a combined sequence scheme to minimize the physical energy consumed by more and output the minimum physical energy consumption value.

Resolution:

Each time you operate, you should select at least two piles of fruit to merge until there is only one pile of fruit left. Then you can use the small root heap for implementation. Each operation is to take out the quantifiers from the top of the heap, and then add their sum to the answer. The last pile of fruit is the answer.

Its correctness can be proved by this. Draw the merged path into a tree and find that the total force consumed is the number of fruits in this pile multiplied by the length of its path to the root. To minimize the sum of this product, you must merge two piles of the current smallest fruit at a time. Why is it right? Consider exchanging two subtrees. The result is either isomorphic with the previous one, or you must put a larger weight in a deeper position, and a smaller weight in a shallower position. The answer will not be reduced. The tree drawn in this way is called Huffman tree.

#include <queue>

#include <iostream>

using namespace std;

priority_queue<int, vector<int>, greater<int> > pq;

int main() {

int n;

cin >> n;

for (int i = 0;i < n;i++) {

int t;

cin >> t;

pq.push(t);

}

int ans = 0;

while (pq.size() > 1) {

int n1 = pq.top();

pq.pop();

int n2 = pq.top();

pq.pop();

pq.push(n1 + n2);

ans += n1 + n2;

}

cout << ans << endl;

}

n minimum sums

Give two arrays \ (A,B \) of length \ (n \). Take any number from \ (A,B \) and add the two numbers to get \ (n^2 \) sums. Find the smallest \ (n \) of these sums.

We can solve this problem with the help of priority queue. First, sort \ (A,B \) from small to large. Look at the table below.

Table 1: \ (A_1+B_1\le A_1+B_2\le A_1 + B_3\le\cdots\le A_1+B_n \)

Table 2: \ (A_2+B_1\le A_2+B_2\le A_2 + B_3\le\cdots\le A_2+B_n \)

Table 3: \ (A_3+B_1\le A_3+B_2\le A_3 + B_3\le\cdots\le A_3+B_n \)

\(\cdots\)

Table n: \ (A_n+B_1\le A_n+B_2\le A_n + B_3\le\cdots\le A_n+B_n \)

We use a structure \ ((sum,a,b) \) to represent a sum value.

We establish a priority queue \ (q \), and the smaller the \ (sum \) in the queue, the higher the priority. Initially, queue \ ((A[i]+B[1],i,1) \), then take out the structure \ ((sum,a,b) \) from the queue, and then queue the structure \ ((A[a],B[b+1],a,b+1) \). In this way, the structure extracted by repeating \ (n \) times is the smallest \ (n \) sum. At any time, the priority queue can only have \ (n \) elements at most, so the time complexity is \ (\ mathcal{O}(n\log n) \).

#include<iostream>

#include<queue>

#include<vector>

#include<algorithm>

using namespace std;

struct node {

int sum, a, b;

node(){}

node(int ssum, int aa, int bb) {

sum = ssum, a = aa, b = bb;

}

bool operator < (const node& rhs) const {

if (sum != rhs.sum) return sum > rhs.sum;

else return a > rhs.a;

}

};

int a[1010], b[1010];

priority_queue<node> pq;

int main() {

int n;

cin >> n;

for (int i = 1;i <= n;i++) cin >> a[i];

for (int i = 1;i <= n;i++) cin >> b[i];

sort(a + 1, a + n + 1);

sort(b + 1, b + n + 1);

for (int i = 1;i <= n;i++) {

pq.push(node(a[i] + b[1], i, 1));

}

for (int i = 1;i <= n;i++) {

node t = pq.top();

pq.pop();

cout << t.sum << ' ';

if (t.b + 1 <= n) {

pq.push(node(t.a) + b[t.b + 1], t.a, t.b + 1);

}

}

return 0;

}

Mapped binary heap

The heap with mapping function is called bidirectional mapping heap. Heap is also known as binary heap, so it is often called mapped binary heap. Compared with ordinary heap, the core function of mapped binary heap is to support fast search of elements. You can find elements with index \ (id \) within the time complexity of \ (\ mathcal{O}(\log n) \), and carry out subsequent modification or deletion.

The difference between mapped binary heap and ordinary heap is that it does not store values, Instead, store the index corresponding to the data (array subscript). When we need to compare the size of parent-child nodes, we need to compare the keywords corresponding to the two indexes; when we need to exchange parent-child nodes, we need to exchange the indexes of parent-child nodes in the heap. Outside the heap, we also need to store a reverse mapping from the index to the elements in the heap, which is used to retrieve the elements of the specified index in the heap for subsequent modification or modification Delete operation.