kubernetes latest version installation stand-alone version

The k8s cluster consists of Master nodes and Node (Worker) nodes.

Today, I'm here to install kubernetes with only one machine.

1. Install the front environment

[root@k8s-master ~]# cat /etc/redhat-release

CentOS Linux release 7.6.1810 (Core)

[root@k8s-master ~]# uname -a

Linux www 3.10.0-1160.45.1.el7.x86_64 #1 SMP Wed Oct 13 17:20:51 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux

[root@k8s-master ~]# ip add

1: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 52:54:00:46:da:39 brd ff:ff:ff:ff:ff:ff

inet 10.0.4.11/22 brd 10.0.7.255 scope global eth0

2. Modify host name

[root@k8s-master ~]# hostnamectl set-hostname k8s-master && bash

``

3. Add hosts

[root@k8s-master ~]# cat >> /etc/hosts << EOF 10.0.4.11 k8s-master EOF

4. Turn off the firewall and selinux

[root@k8s-master ~]# systemctl stop firewalld [root@k8s-master ~]# systemctl disable firewalld [root@k8s-master ~]# sed -i 's/enforcing/disabled/' /etc/selinux/config # permanent [root@k8s-master ~]# setenforce 0 # temporary

5. Close swap

[root@k8s-master ~]# swapoff -a # temporary [root@k8s-master ~]# sed -i 's/.*swap.*/#&/' /etc/fstab # permanent

6. Deliver the bridged IPv4 traffic to the iptables chain

[root@k8s-master ~]# cat > /etc/sysctl.d/k8s.conf << EOF net.bridge.bridge-nf-call-ip6tables = 1 net.bridge.bridge-nf-call-iptables = 1 EOF [root@k8s-master ~]# sysctl --system # take effect

7. Time synchronization

[root@k8s-master ~]# yum install ntpdate -y [root@k8s-master ~]# ntpdate time.windows.com

8. Install Docker

[root@k8s-master ~]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo [root@k8s-master ~]# systemctl enable docker && systemctl start docker && systemctl status docker [root@k8s-master ~]# docker --version

9. Add accelerator to docker

[root@k8s-master ~]# cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://qj799ren.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2"

}

EOF

[root@k8s-master ~]# systemctl restart docker

10. Add yum source for kubernetes

[root@k8s-master ~]# cat > /etc/yum.repos.d/kubernetes.repo << EOF [kubernetes] name=Kubernetes baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64 enabled=1 gpgcheck=0 repo_gpgcheck=0 gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg EOF

11. Install kubedm, kubelet and kubectl

[root@k8s-master ~]# yum -y install kubelet kubeadm kubectl #The latest version of the current time is V1 twenty-three point one [root@k8s-master ~]# systemctl enable kubelet

12. Deploy Kubernetes Master

[root@k8s-master ~]# kubeadm init --apiserver-advertise-address=10.0.4.11 --image-repository registry.aliyuncs.com/google_containers --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16 --ignore-preflight-errors=all

Parameter Description:

–apiserver-advertise-address=10.0.4.11 this parameter is the IP address of the master host. For example, the IP address of my master host is 10.0 four point one one

–image-repository registry.aliyuncs.com/google_containers is the image address. Since foreign addresses cannot be accessed, the Alibaba cloud warehouse address is used: repository registry aliyuncs. com/google_ containers

–kubernetes-version=v1.17.4 this parameter is the version number of k8s software downloaded

–service-cidr=10.96. The IP address after 0.0/12 is directly applied to 10.96 0.0/12, which can also be applied in future installation. Do not change it

–pod-network-cidr=10.244.0.0/16 k8s the IP segments that can be used by the network between internal pod nodes cannot be written in the same way as service CIDR. If you don't know how to configure them, use this 10.244 0.0/16

– ignore preflight errors = all adding this will ignore the error

After executing the statement, see the following information to indicate that the installation is successful.

Your Kubernetes control-plane has initialized successfully! To start using your cluster, you need to run the following as a regular user: mkdir -p $HOME/.kube sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config sudo chown $(id -u):$(id -g) $HOME/.kube/config Alternatively, if you are the root user, you can run: export KUBECONFIG=/etc/kubernetes/admin.conf You should now deploy a pod network to the cluster. Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at: https://kubernetes.io/docs/concepts/cluster-administration/addons/ Then you can join any number of worker nodes by running the following on each as root: kubeadm join 10.0.4.11:6443 --token 7aj43b.lsaj1n8xg2kqhs6q \ --discovery-token-ca-cert-hash sha256:b1ac091af23de4ecf339f7ea37973452d53e6e04a28c531bb6534032ea9b6ef7

13. Execute the following statement

[root@k8s-master ~]# mkdir -p $HOME/.kube [root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config [root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config [root@k8s-master ~]# kubectl get nodes #Node status is NotReady NAME STATUS ROLES AGE VERSION k8s-master NotReady control-plane,master 5m16s v1.23.1

14. Install Pod network plug-in (CNI)

[root@k8s-master ~]# wget https://docs.projectcalico.org/manifests/calico.yaml # I use calico recommended by the official website here

vim calico.yaml # note that the only thing that needs to be modified is calico_ IPV4POOL_ The IP corresponding to CIDR needs to be the IP of your host

kind: ConfigMap

apiVersion: v1

metadata:

name: calico-config

namespace: kube-system

data:

# Typha is disabled.

typha_service_name: "none"

# Configure the backend to use.

calico_backend: "bird"

# Configure the MTU to use

veth_mtu: "1440"

# The CNI network configuration to install on each node. The special

# values in this config will be automatically populated.

cni_network_config: |-

{

"name": "k8s-pod-network",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "calico",

"log_level": "info",

"datastore_type": "kubernetes",

"nodename": "__KUBERNETES_NODE_NAME__",

"mtu": __CNI_MTU__,

"ipam": {

"type": "calico-ipam"

},

"policy": {

"type": "k8s"

},

"kubernetes": {

"kubeconfig": "__KUBECONFIG_FILEPATH__"

}

},

{

"type": "portmap",

"snat": true,

"capabilities": {"portMappings": true}

}

]

}

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: felixconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: FelixConfiguration

plural: felixconfigurations

singular: felixconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamblocks.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMBlock

plural: ipamblocks

singular: ipamblock

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: blockaffinities.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BlockAffinity

plural: blockaffinities

singular: blockaffinity

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamhandles.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMHandle

plural: ipamhandles

singular: ipamhandle

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamconfigs.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMConfig

plural: ipamconfigs

singular: ipamconfig

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgppeers.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPPeer

plural: bgppeers

singular: bgppeer

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgpconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPConfiguration

plural: bgpconfigurations

singular: bgpconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ippools.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPPool

plural: ippools

singular: ippool

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: hostendpoints.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: HostEndpoint

plural: hostendpoints

singular: hostendpoint

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: clusterinformations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: ClusterInformation

plural: clusterinformations

singular: clusterinformation

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworkpolicies.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkPolicy

plural: globalnetworkpolicies

singular: globalnetworkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworksets.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkSet

plural: globalnetworksets

singular: globalnetworkset

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networkpolicies.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkPolicy

plural: networkpolicies

singular: networkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networksets.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkSet

plural: networksets

singular: networkset

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

rules:

# Nodes are watched to monitor for deletions.

- apiGroups: [""]

resources:

- nodes

verbs:

- watch

- list

- get

# Pods are queried to check for existence.

- apiGroups: [""]

resources:

- pods

verbs:

- get

# IPAM resources are manipulated when nodes are deleted.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

verbs:

- list

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

# Needs access to update clusterinformations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- clusterinformations

verbs:

- get

- create

- update

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-kube-controllers

subjects:

- kind: ServiceAccount

name: calico-kube-controllers

namespace: kube-system

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-node

rules:

# The CNI plugin needs to get pods, nodes, and namespaces.

- apiGroups: [""]

resources:

- pods

- nodes

- namespaces

verbs:

- get

- apiGroups: [""]

resources:

- endpoints

- services

verbs:

# Used to discover service IPs for advertisement.

- watch

- list

# Used to discover Typhas.

- get

- apiGroups: [""]

resources:

- nodes/status

verbs:

# Needed for clearing NodeNetworkUnavailable flag.

- patch

# Calico stores some configuration information in node annotations.

- update

# Watch for changes to Kubernetes NetworkPolicies.

- apiGroups: ["networking.k8s.io"]

resources:

- networkpolicies

verbs:

- watch

- list

# Used by Calico for policy information.

- apiGroups: [""]

resources:

- pods

- namespaces

- serviceaccounts

verbs:

- list

- watch

# The CNI plugin patches pods/status.

- apiGroups: [""]

resources:

- pods/status

verbs:

- patch

# Calico monitors various CRDs for config.

- apiGroups: ["crd.projectcalico.org"]

resources:

- globalfelixconfigs

- felixconfigurations

- bgppeers

- globalbgpconfigs

- bgpconfigurations

- ippools

- ipamblocks

- globalnetworkpolicies

- globalnetworksets

- networkpolicies

- networksets

- clusterinformations

- hostendpoints

- blockaffinities

verbs:

- get

- list

- watch

# Calico must create and update some CRDs on startup.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

- felixconfigurations

- clusterinformations

verbs:

- create

- update

# Calico stores some configuration information on the node.

- apiGroups: [""]

resources:

- nodes

verbs:

- get

- list

- watch

# These permissions are only requried for upgrade from v2.6, and can

# be removed after upgrade or on fresh installations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- bgpconfigurations

- bgppeers

verbs:

- create

- update

# These permissions are required for Calico CNI to perform IPAM allocations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

- apiGroups: ["crd.projectcalico.org"]

resources:

- ipamconfigs

verbs:

- get

# Block affinities must also be watchable by confd for route aggregation.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

verbs:

- watch

# The Calico IPAM migration needs to get daemonsets. These permissions can be

# removed if not upgrading from an installation using host-local IPAM.

- apiGroups: ["apps"]

resources:

- daemonsets

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: calico-node

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-node

subjects:

- kind: ServiceAccount

name: calico-node

namespace: kube-system

---

kind: DaemonSet

apiVersion: apps/v1

metadata:

name: calico-node

namespace: kube-system

labels:

k8s-app: calico-node

spec:

selector:

matchLabels:

k8s-app: calico-node

updateStrategy:

type: RollingUpdate

rollingUpdate:

maxUnavailable: 1

template:

metadata:

labels:

k8s-app: calico-node

annotations:

# This, along with the CriticalAddonsOnly toleration below,

# marks the pod as a critical add-on, ensuring it gets

# priority scheduling and that its resources are reserved

# if it ever gets evicted.

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

beta.kubernetes.io/os: linux

hostNetwork: true

tolerations:

# Make sure calico-node gets scheduled on all nodes.

- effect: NoSchedule

operator: Exists

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- effect: NoExecute

operator: Exists

serviceAccountName: calico-node

# Minimize downtime during a rolling upgrade or deletion; tell Kubernetes to do a "force

# deletion": https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods.

terminationGracePeriodSeconds: 0

priorityClassName: system-node-critical

initContainers:

# This container performs upgrade from host-local IPAM to calico-ipam.

# It can be deleted if this is a fresh installation, or if you have already

# upgraded to use calico-ipam.

- name: upgrade-ipam

image: calico/cni:v3.11.3

command: ["/opt/cni/bin/calico-ipam", "-upgrade"]

env:

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

volumeMounts:

- mountPath: /var/lib/cni/networks

name: host-local-net-dir

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

securityContext:

privileged: true

# This container installs the CNI binaries

# and CNI network config file on each node.

- name: install-cni

image: calico/cni:v3.11.3

command: ["/install-cni.sh"]

env:

# Name of the CNI config file to create.

- name: CNI_CONF_NAME

value: "10-calico.conflist"

# The CNI network config to install on each node.

- name: CNI_NETWORK_CONFIG

valueFrom:

configMapKeyRef:

name: calico-config

key: cni_network_config

# Set the hostname based on the k8s node name.

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# CNI MTU Config variable

- name: CNI_MTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# Prevents the container from sleeping forever.

- name: SLEEP

value: "false"

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

- mountPath: /host/etc/cni/net.d

name: cni-net-dir

securityContext:

privileged: true

# Adds a Flex Volume Driver that creates a per-pod Unix Domain Socket to allow Dikastes

# to communicate with Felix over the Policy Sync API.

- name: flexvol-driver

image: calico/pod2daemon-flexvol:v3.11.3

volumeMounts:

- name: flexvol-driver-host

mountPath: /host/driver

securityContext:

privileged: true

containers:

# Runs calico-node container on each Kubernetes node. This

# container programs network policy and routes on each

# host.

- name: calico-node

image: calico/node:v3.11.3

env:

# Use Kubernetes API as the backing datastore.

- name: DATASTORE_TYPE

value: "kubernetes"

# Wait for the datastore.

- name: WAIT_FOR_DATASTORE

value: "true"

# Set based on the k8s node name.

- name: NODENAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# Choose the backend to use.

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

# Cluster type to identify the deployment type

- name: CLUSTER_TYPE

value: "k8s,bgp"

# Auto-detect the BGP IP address.

- name: IP

value: "autodetect"

# Enable IPIP

- name: CALICO_IPV4POOL_IPIP

value: "Always"

# Set MTU for tunnel device used if ipip is enabled

- name: FELIX_IPINIPMTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# The default IPv4 pool to create on startup if none exists. Pod IPs will be

# chosen from this range. Changing this value after installation will have

# no effect. This should fall within `--cluster-cidr`.

- name: CALICO_IPV4POOL_CIDR

value: "10.244.0.0/16"

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"

# Set Felix endpoint to host default action to ACCEPT.

- name: FELIX_DEFAULTENDPOINTTOHOSTACTION

value: "ACCEPT"

# Disable IPv6 on Kubernetes.

- name: FELIX_IPV6SUPPORT

value: "false"

# Set Felix logging to "info"

- name: FELIX_LOGSEVERITYSCREEN

value: "info"

- name: FELIX_HEALTHENABLED

value: "true"

securityContext:

privileged: true

resources:

requests:

cpu: 250m

livenessProbe:

exec:

command:

- /bin/calico-node

- -felix-live

- -bird-live

periodSeconds: 10

initialDelaySeconds: 10

failureThreshold: 6

readinessProbe:

exec:

command:

- /bin/calico-node

- -felix-ready

- -bird-ready

periodSeconds: 10

volumeMounts:

- mountPath: /lib/modules

name: lib-modules

readOnly: true

- mountPath: /run/xtables.lock

name: xtables-lock

readOnly: false

- mountPath: /var/run/calico

name: var-run-calico

readOnly: false

- mountPath: /var/lib/calico

name: var-lib-calico

readOnly: false

- name: policysync

mountPath: /var/run/nodeagent

volumes:

# Used by calico-node.

- name: lib-modules

hostPath:

path: /lib/modules

- name: var-run-calico

hostPath:

path: /var/run/calico

- name: var-lib-calico

hostPath:

path: /var/lib/calico

- name: xtables-lock

hostPath:

path: /run/xtables.lock

type: FileOrCreate

# Used to install CNI.

- name: cni-bin-dir

hostPath:

path: /opt/cni/bin

- name: cni-net-dir

hostPath:

path: /etc/cni/net.d

# Mount in the directory for host-local IPAM allocations. This is

# used when upgrading from host-local to calico-ipam, and can be removed

# if not using the upgrade-ipam init container.

- name: host-local-net-dir

hostPath:

path: /var/lib/cni/networks

# Used to create per-pod Unix Domain Sockets

- name: policysync

hostPath:

type: DirectoryOrCreate

path: /var/run/nodeagent

# Used to install Flex Volume Driver

- name: flexvol-driver-host

hostPath:

type: DirectoryOrCreate

path: /usr/libexec/kubernetes/kubelet-plugins/volume/exec/nodeagent~uds

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-node

namespace: kube-system

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

spec:

# The controllers can only have a single active instance.

replicas: 1

selector:

matchLabels:

k8s-app: calico-kube-controllers

strategy:

type: Recreate

template:

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

beta.kubernetes.io/os: linux

tolerations:

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- key: node-role.kubernetes.io/master

effect: NoSchedule

serviceAccountName: calico-kube-controllers

priorityClassName: system-cluster-critical

containers:

- name: calico-kube-controllers

image: calico/kube-controllers:v3.11.3

env:

# Choose which controllers to run.

- name: ENABLED_CONTROLLERS

value: node

- name: DATASTORE_TYPE

value: kubernetes

readinessProbe:

exec:

command:

- /usr/bin/check-status

- -r

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-kube-controllers

namespace: kube-system

Note: after downloading, you need to modify the definition of Pod network (CALICO_IPV4POOL_CIDR), which is the same as that specified in -- Pod network CIDR of kubedm init. - Pod network CIDR = 10.244.0.0/16

[root@k8s-master ~]# kubectl apply -f calico.yaml

15. Verify network

[root@k8s-master ~]# kubectl get nodes #Seeing Ready indicates that the network is normal NAME STATUS ROLES AGE VERSION k8s-master Ready control-plane,master 78m v1.23.1 [root@k8s-master ~]# kubectl get pods -n kube-system #It's right to show all Running NAME READY STATUS RESTARTS AGE calico-kube-controllers-84564464dc-5rhvm 1/1 Running 0 9m16s calico-node-ljzrj 1/1 Running 0 9m16s coredns-6d8c4cb4d-dcth5 1/1 Running 0 80m coredns-6d8c4cb4d-x8v6w 1/1 Running 0 80m etcd-k8s-master 1/1 Running 0 80m kube-apiserver-k8s-master 1/1 Running 0 80m kube-controller-manager-k8s-master 1/1 Running 0 80m kube-proxy-8q4lx 1/1 Running 0 80m kube-scheduler-k8s-master 1/1 Running 0 80m

16. After the k8s installation of the single version, the service cannot be deployed.

Because default master Cannot deploy pod,Stain, You need to remove the stain or add a new one node,I'm here to remove the stain. [root@k8s-master ~]# kubectl get node -o yaml | grep taint -A 5 #After execution, the output description is stained [root@k8s-master ~]# kubectl taint nodes --all node-role.kubernetes.io/master- #Just execute this sentence, that is, remove the stain

17. Install the complete command package

[root@k8s-master ~]# yum -y install bash-completion #Install the complete command package [root@k8s-master ~]# kubectl completion bash [root@k8s-master ~]# source /usr/share/bash-completion/bash_completion [root@k8s-master ~]# kubectl completion bash >/etc/profile.d/kubectl.sh [root@k8s-master ~]# source /etc/profile.d/kubectl.sh [root@k8s-master ~]# cat >> /root/.bashrc <<EOF source /etc/profile.d/kubectl.sh EOF

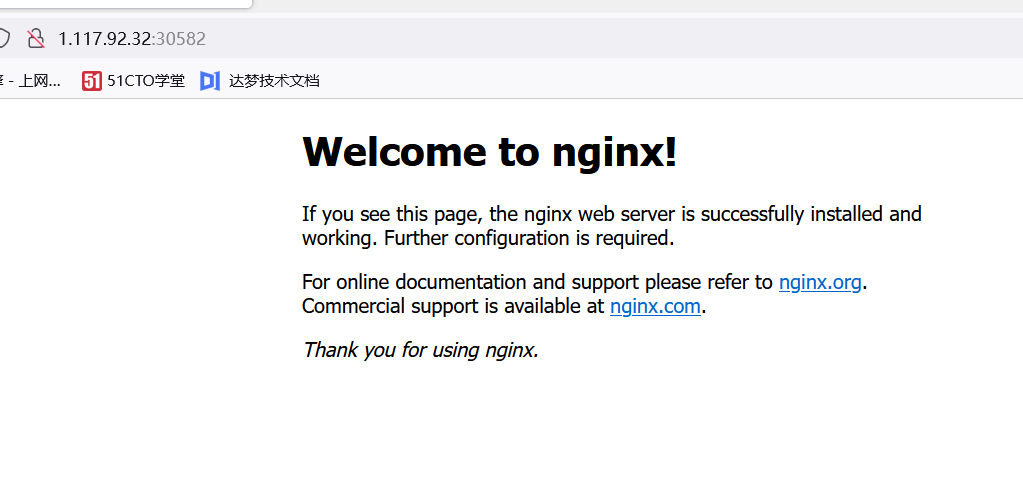

18. Test kubernetes cluster

Deploy an Nginx in the Kubernetes cluster:

[root@k8s-master ~]# kubectl create deployment nginx --image=nginx [root@k8s-master ~]# kubectl expose deployment nginx --port=80 --type=NodePort [root@k8s-master ~]# kubectl get pods,svc NAME READY STATUS RESTARTS AGE pod/nginx-85b98978db-sp968 1/1 Running 0 28s NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 88m service/nginx NodePort 10.103.251.155 <none> 80:30582/TCP 26s

Note: port 30582 is exposed

Because this is Tencent's public cloud, I can access nginx using the external network