Artificial Neural Network is a new computing system with self-learning ability. It is widely used in many fields, such as pattern recognition, function approximation and loan risk assessment. The research on neural network has always been a hot direction today. After self-learning an introductory book on neural network, Lan Lan proposed a simplified model. He hopes you can help him test the practicability of this neural network model with programs.

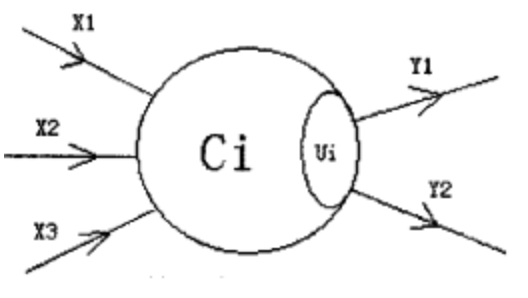

In LAN LAN's model, the neural network is a directed graph. The nodes in the graph are called neurons, and at most one edge is connected between two neurons. The following figure is an example of a neuron:

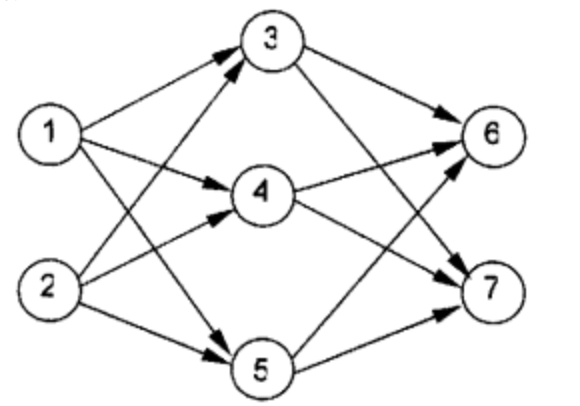

In the figure, X_1-X_3X1 − X3 is the information input channel, Y_1-Y_2Y1 − Y2 is the information output channel, C_1C1 indicates the current state of neurons, U_iUi # is the threshold, which can be regarded as an internal parameter of neurons. Neurons are arranged in a certain order to form the whole neural network. In LAN LAN's model, the neural network is divided into several layers; It is called input layer, output layer, and several intermediate layers. Each layer of neurons only outputs information to the next layer of neurons, and only receives information from the upper layer of neurons. The following figure is an example of a simple three-layer neural network.

Lanlan regulation, C_iCi obeys formula: C_i = \sum_{(j,i) \in E}W_{ji}C_j - U_iCi = ∑ (J, I) ∈ e Wji Cj − Ui (where nn is the number of all neurons in the network)

W in the formula_ {Ji} wji (possibly negative) represents the weight of the edge connecting the {jj} neuron and the {ii} neuron. When C_ When ICI ﹤ is greater than ﹤ 00 ﹤ the neuron is in an excited state, otherwise it is in a calm state. When a neuron is excited, it will send a signal to other neurons in the next second, and the intensity of the signal is C_iCi.

In this way, after the input layer neurons are excited, the whole network system operates under the promotion of information transmission. Now, given a neural network and the current state of neurons in the input layer (C_iCi), your program is required to calculate the state of the final network output layer.

Input format

The first line is the two integers ﹐ nn (1 \le n \le 1001 ≤ n ≤ 100) and ﹐ pp.

Next, line # nn, two integers in each line. Line # i+1i+1 # is the initial state of neuron i and its threshold (U_iUi). At the beginning, the state of neurons in non input layer must be # 00.

Next, there are # PP # lines. Each line consists of two integers # ii, jj # and an integer # W_{ij}Wij indicates that the edge weight connecting neurons {ii and jj} is {W_{ij}Wij.

Output format

It contains several lines, each line has two integers, which correspond to the number of a neuron and its final state respectively. The two integers are separated by spaces.

Only output the neuron state of the output layer whose last state is non-zero, and output it in the order of number from small to large!

If the final state of neurons in the output layer is {00, NULL is output.

Sample 1

| Inputcopy | Outputcopy |

|---|---|

5 6 1 0 1 0 0 1 0 1 0 1 1 3 1 1 4 1 1 5 1 2 3 1 2 4 1 2 5 1 | 3 1 4 1 5 1 |

#include <iostream>

#include <queue>

using namespace std;

const int MAXN = 110;

int net[MAXN][MAXN],link[MAXN][MAXN];

struct info{

int c,u,in,out;

};

info node[MAXN];

int main()

{

queue<int> q;

int N,E,c,u,t1,t2,w;

scanf("%d %d",&N,&E);

for(int i = 1;i <= N;i++){

scanf("%d %d",&c,&u);

if(c <= 0) c -= u;

node[i].c = c;

node[i].u = u;

}

for(int i = 1;i <= E;i++){

scanf("%d %d %d",&t1,&t2,&w);

net[t1][t2] = w;

link[t1][t2] = 1;

node[t2].in++;

node[t1].out++;

}

for(int i = 1;i <= N;i++){

if(node[i].in == 0)

q.push(i);

}

while(!q.empty()){

int temp = q.front();

q.pop();

for(int i = 1;i <= N;i++){

if(link[temp][i]){

node[i].in--;

if(node[temp].c > 0) node[i].c += net[temp][i] * node[temp].c;

if(node[i].in == 0 &&node[i].out > 0){

q.push(i);

}

}

}

}

int cnt = 0;

for(int i = 1;i <= N;i++){

if(node[i].out == 0 && node[i].c > 0){

printf("%d %d\n",i,node[i].c);

cnt++;

}

}

if(cnt == 0) printf("NULL");

return 0;

}