11. Redis master-slave replication

11.1 Overview

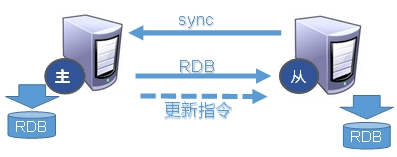

Master-slave replication is the mechanism by which data updated on the master is automatically synchronized to the standby machines (slaves) according to configuration and policy. The master mainly handles writes; the slaves mainly handle reads.

Purpose

- Read/write separation, which scales read capacity

- Disaster recovery and fast restoration of service

11.2 Building master-slave replication

- Create the folder /myredis in the root directory, copy the Redis configuration file (redis.conf) into it, and turn off AOF persistence (appendonly no)

- Create three files, redis6379.conf, redis6380.conf and redis6381.conf, with the following contents:

        ###################### redis6379.conf #######################
        include /myredis/redis.conf
        pidfile /var/run/redis_6379.pid
        port 6379
        dbfilename dump6379.rdb

        ###################### redis6380.conf #######################
        include /myredis/redis.conf
        pidfile /var/run/redis_6380.pid
        port 6380
        dbfilename dump6380.rdb

        ###################### redis6381.conf #######################
        include /myredis/redis.conf
        pidfile /var/run/redis_6381.pid
        port 6381
        dbfilename dump6381.rdb

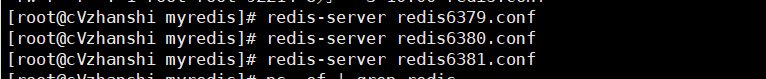

- Start redis on three ports at the same time

- Check whether the service is started

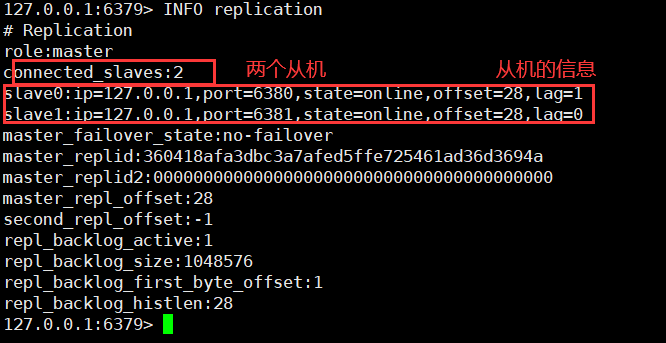

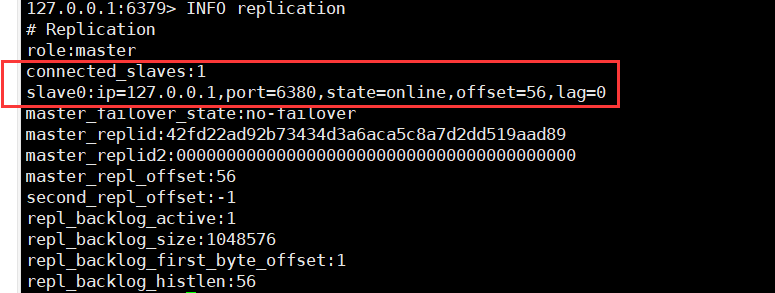

- Check the replication status of the three instances

info replication # Print master-slave replication information

- Configure the slaves only; the master needs no configuration

slaveof <ip> <port> # Run on each slave, giving the master's IP and port. On 6380 and 6381: slaveof 127.0.0.1 6379

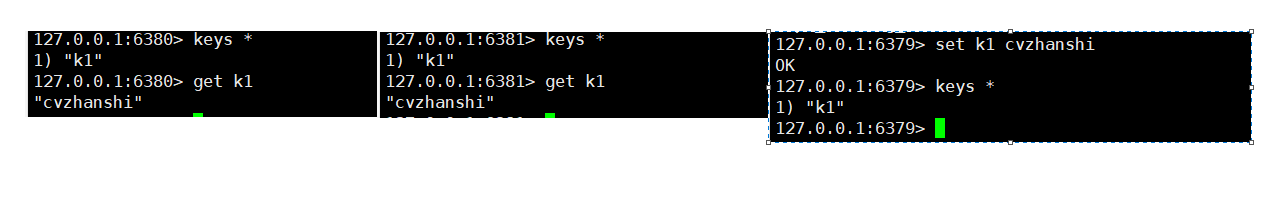

- Data written on the master can be read on the slaves; writing on a slave returns an error

- If the master goes down and restarts, everything resumes as before. If a slave restarts, it must be attached again: slaveof 127.0.0.1 6379

11.3 Three common setups

11.3.1 One master, two slaves

- Where does replication start? Do slave1 and slave2 copy from the beginning or only from the point at which they attached? For example, if a slave attaches at k4, are the earlier k1, k2 and k3 copied as well?

The slave copies the master's entire contents from the beginning, so k1, k2, k3 and k4 are all copied

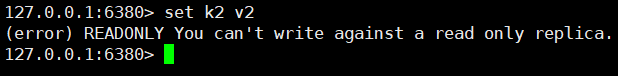

- Can a slave write? Can it run set?

No. By default a slave is read-only

- What happens after the master shuts down? Does a slave take over, or do the slaves stand by?

The slaves stand by and wait. Once the master restarts, everything returns to normal

- After the master comes back and adds new records, can the slaves still copy them?

Yes. After the master restarts, what it writes is synchronized to the slaves as before

- What happens when one of the slaves goes down? After it restarts, does it rejoin the group as before?

A slave that goes down is detached from the group. After restarting, it must run slaveof <ip> <port> again to resynchronize with the master

How replication works

- After a slave starts and connects to the master, it sends a sync command.

- On receiving the command, the master starts a background save and, in the meantime, collects every command it receives that modifies the dataset. When the background save finishes, the master transfers the entire data file to the slave, completing one full synchronization.

- Full replication: after receiving the database file, the slave saves it and loads it into memory.

- Incremental replication: the master then forwards the collected modification commands, and all later ones, to the slave in order, which keeps the slave in sync.

- However, whenever a slave reconnects to the master, a full synchronization (full replication) is performed automatically. Newer Redis versions can attempt a partial resynchronization first.

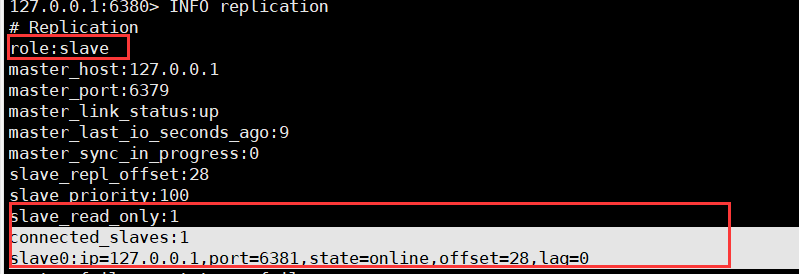
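The full-then-incremental sequence above can be pictured with a toy in-memory model. This is only a sketch under simplifying assumptions: the classes `Master` and `Slave` and their methods are hypothetical names made up for illustration, plain maps stand in for the dataset and the RDB file, and nothing here is Redis's actual implementation. It replays the k1 to k4 scenario from section 11.3.1, where a slave attaches just before k4 is written.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Toy model of full + incremental replication (illustrative only, not Redis code).
class ReplicationSketch {

    static class Master {
        final Map<String, String> data = new HashMap<>();
        // Writes received since the last snapshot was taken.
        final List<String[]> buffered = new ArrayList<>();

        void set(String key, String value) {
            data.put(key, value);
            buffered.add(new String[] {key, value});
        }

        // Stands in for the background save: copy the dataset and start
        // collecting the writes that arrive afterwards.
        Map<String, String> snapshot() {
            buffered.clear();
            return new HashMap<>(data);
        }
    }

    static class Slave {
        final Map<String, String> data = new HashMap<>();

        // Full replication: drop old data and load the master's snapshot.
        void loadSnapshot(Map<String, String> snapshot) {
            data.clear();
            data.putAll(snapshot);
        }

        // Incremental replication: replay the buffered writes in order.
        void apply(List<String[]> commands) {
            for (String[] command : commands) {
                data.put(command[0], command[1]);
            }
        }
    }

    // The slave attaches after k1..k3 exist and just before k4 is written.
    static Map<String, String> demo() {
        Master master = new Master();
        master.set("k1", "v1");
        master.set("k2", "v2");
        master.set("k3", "v3");

        Slave slave = new Slave();
        Map<String, String> snapshot = master.snapshot(); // slave asked to sync
        master.set("k4", "v4");                           // write arrives during transfer
        slave.loadSnapshot(snapshot);                     // full replication
        slave.apply(master.buffered);                     // incremental replication
        return slave.data;
    }

    public static void main(String[] args) {
        // prints {k1=v1, k2=v2, k3=v3, k4=v4}
        System.out.println(new TreeMap<>(demo()));
    }
}
```

The slave ends up with all four keys even though it attached late, which matches the answer given for the "where does replication start" question.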

11.3.2 Passing the torch (chained replication)

- A slave can itself be the master of the next slave: it accepts connection and synchronization requests from other slaves and acts as the next master in the chain. This reduces the replication load on the original master and spreads the risk.

- Build the chain with slaveof <ip> <port>

- Changing a slave's master midway clears its previous data and builds a fresh copy from the new master

- The risk is that once a slave in the chain goes down, the slaves behind it can no longer replicate

- If the master goes down, the slaves remain slaves and cannot accept writes

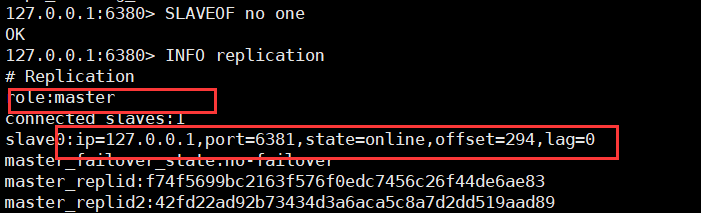

11.3.3 Turning a slave into the master

When a master goes down, the slave behind it can be promoted to master immediately, and the slaves behind that one need no changes.

Use slaveof no one to turn a slave into a master

- 6379 goes down

- Promote 6380 to master by running slaveof no one on it

11.4 Sentinel mode

Sentinel mode is the automatic version of promoting a slave: it monitors the master in the background and, if the master fails, automatically promotes a slave to master based on the sentinels' votes

Example

- First build a master-slave environment

- Create a file named sentinel.conf in the /myredis directory; the name must be exact

- Configure the sentinel by adding the following content

sentinel monitor mymaster 127.0.0.1 6379 1 # mymaster is the name given to the monitored master; 1 is the number of sentinels that must agree before a failover takes place

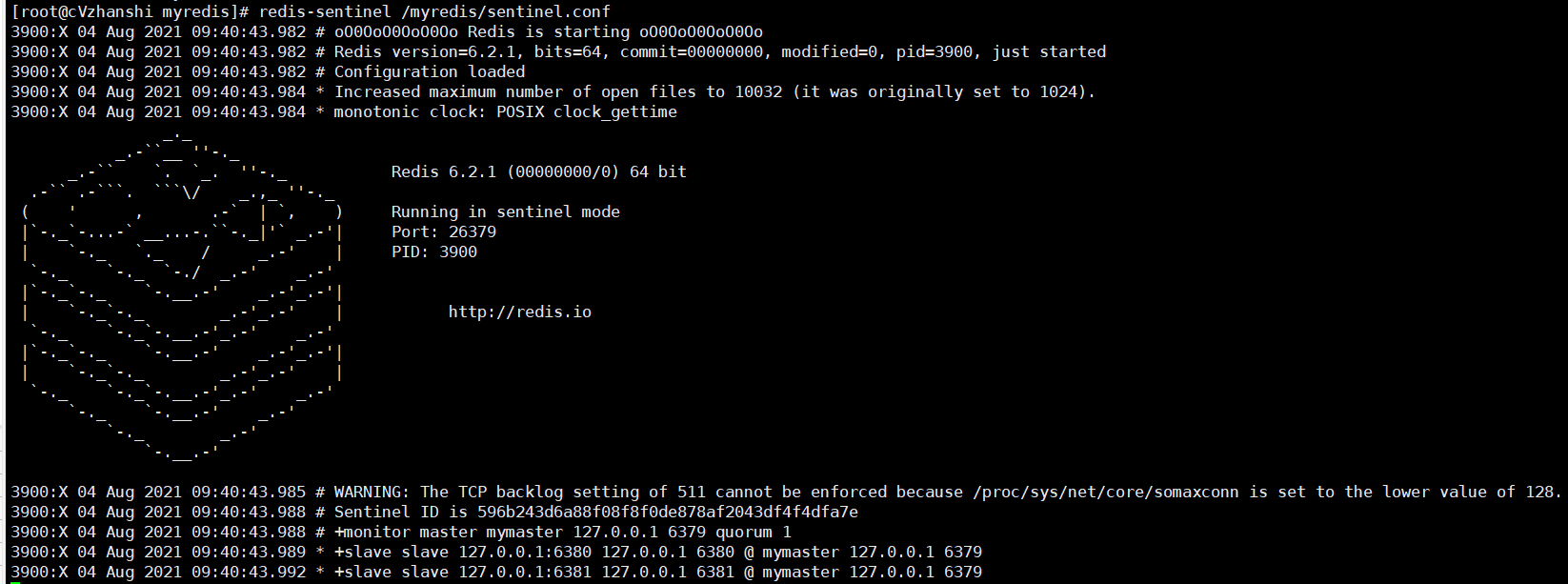

- Start the sentinel by running redis-sentinel /myredis/sentinel.conf

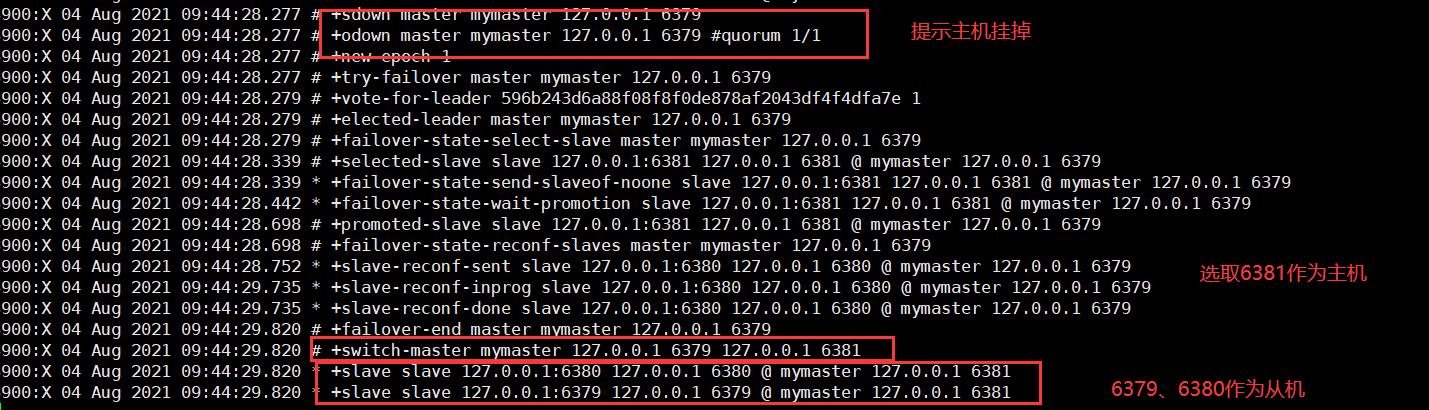

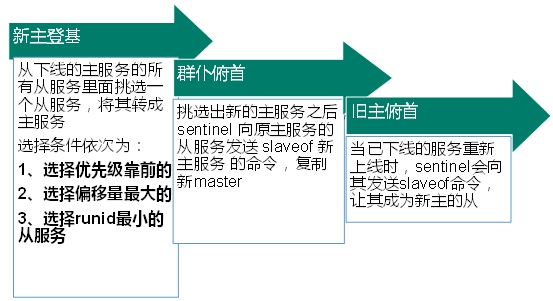

- When the master goes down, a new master is elected from among the slaves

- Restart the original master; after restarting, it becomes a slave of the new master

Replication delay

All writes are performed on the master first and then propagated to the slaves, so there is always some delay between the master and the slaves. The delay grows when the system is very busy, and adding more slaves makes it worse.

Fault recovery

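When the master fails, the slave to promote is chosen by comparing the following, in order:

- Priority: set in redis.conf, defaulting to slave-priority 100 (named replica-priority in newer versions). The smaller the value, the higher the priority

- Offset: the slave that has received the most data from the original master, that is, the one with the largest replication offset

- Run ID: each Redis instance randomly generates a 40-character run ID at startup; the slave with the smallest run ID is chosen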
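The selection rules described above can be read as a three-level comparison: priority first, then offset, then run ID. The following is a minimal sketch of that ordering, assuming the criteria are applied in exactly that sequence. The `Candidate` class, its field names and the sample values are hypothetical; real run IDs are 40 characters long, and short strings are used here only to keep the example readable.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Sketch of the order in which candidate slaves are compared (illustrative only).
class FailoverSelectionSketch {

    static class Candidate {
        final String name;   // label for the example, e.g. the port
        final int priority;  // smaller value = higher priority
        final long offset;   // larger value = more data received from the old master
        final String runId;  // smaller value wins the final tie-break

        Candidate(String name, int priority, long offset, String runId) {
            this.name = name;
            this.priority = priority;
            this.offset = offset;
            this.runId = runId;
        }
    }

    // Negative result means `a` is the better candidate.
    static int compare(Candidate a, Candidate b) {
        if (a.priority != b.priority) {
            return Integer.compare(a.priority, b.priority); // lower priority value first
        }
        if (a.offset != b.offset) {
            return Long.compare(b.offset, a.offset);        // higher offset first
        }
        return a.runId.compareTo(b.runId);                  // smaller run ID first
    }

    static Candidate pick(List<Candidate> candidates) {
        return Collections.min(candidates, FailoverSelectionSketch::compare);
    }

    public static void main(String[] args) {
        List<Candidate> candidates = Arrays.asList(
                new Candidate("6380", 100, 1200L, "a1"),
                new Candidate("6381", 100, 1500L, "b2"));
        // Same priority, so the larger offset decides: prints 6381
        System.out.println(pick(candidates).name);
    }
}
```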

Master-slave replication with Jedis

With Sentinel running, obtain the Jedis object through a JedisSentinelPool, using a method like this (the wrapper class name is illustrative):

    import java.util.HashSet;
    import java.util.Set;
    import redis.clients.jedis.Jedis;
    import redis.clients.jedis.JedisPoolConfig;
    import redis.clients.jedis.JedisSentinelPool;

    public class JedisSentinelUtil {
        private static JedisSentinelPool jedisSentinelPool = null;

        // synchronized so that concurrent callers create the pool only once
        public static synchronized Jedis getJedisFromSentinel() {
            if (jedisSentinelPool == null) {
                Set<String> sentinelSet = new HashSet<>();
                sentinelSet.add("192.168.11.103:26379"); // address of the sentinel, not of a Redis node

                JedisPoolConfig jedisPoolConfig = new JedisPoolConfig();
                jedisPoolConfig.setMaxTotal(10);             // maximum number of connections
                jedisPoolConfig.setMaxIdle(5);               // maximum idle connections
                jedisPoolConfig.setMinIdle(5);               // minimum idle connections
                jedisPoolConfig.setBlockWhenExhausted(true); // wait when the pool is exhausted
                jedisPoolConfig.setMaxWaitMillis(2000);      // maximum wait time in milliseconds
                jedisPoolConfig.setTestOnBorrow(true);       // ping the connection before handing it out

                jedisSentinelPool = new JedisSentinelPool("mymaster", sentinelSet, jedisPoolConfig);
            }
            return jedisSentinelPool.getResource();
        }
    }

12. Redis Cluster

12.1 Introduction to clusters

Problems encountered before clustering

1. How can Redis scale out when capacity is insufficient?

2. How can Redis distribute concurrent write operations?

3. In master-slave mode, in chained replication mode, or after a master failure, the master's IP address changes, so the application's configuration must be updated with the new host address, port and other details.

Previously this was solved with a proxy host, but Redis 3.0 provides its own solution: a decentralized cluster configuration.

Cluster overview

A Redis cluster scales Redis horizontally: N Redis nodes are started, the whole dataset is distributed across them, and each node stores roughly 1/N of the total data.

Redis cluster provides a degree of availability through partitioning: even if some nodes in the cluster fail or cannot communicate, the cluster can continue to process command requests.

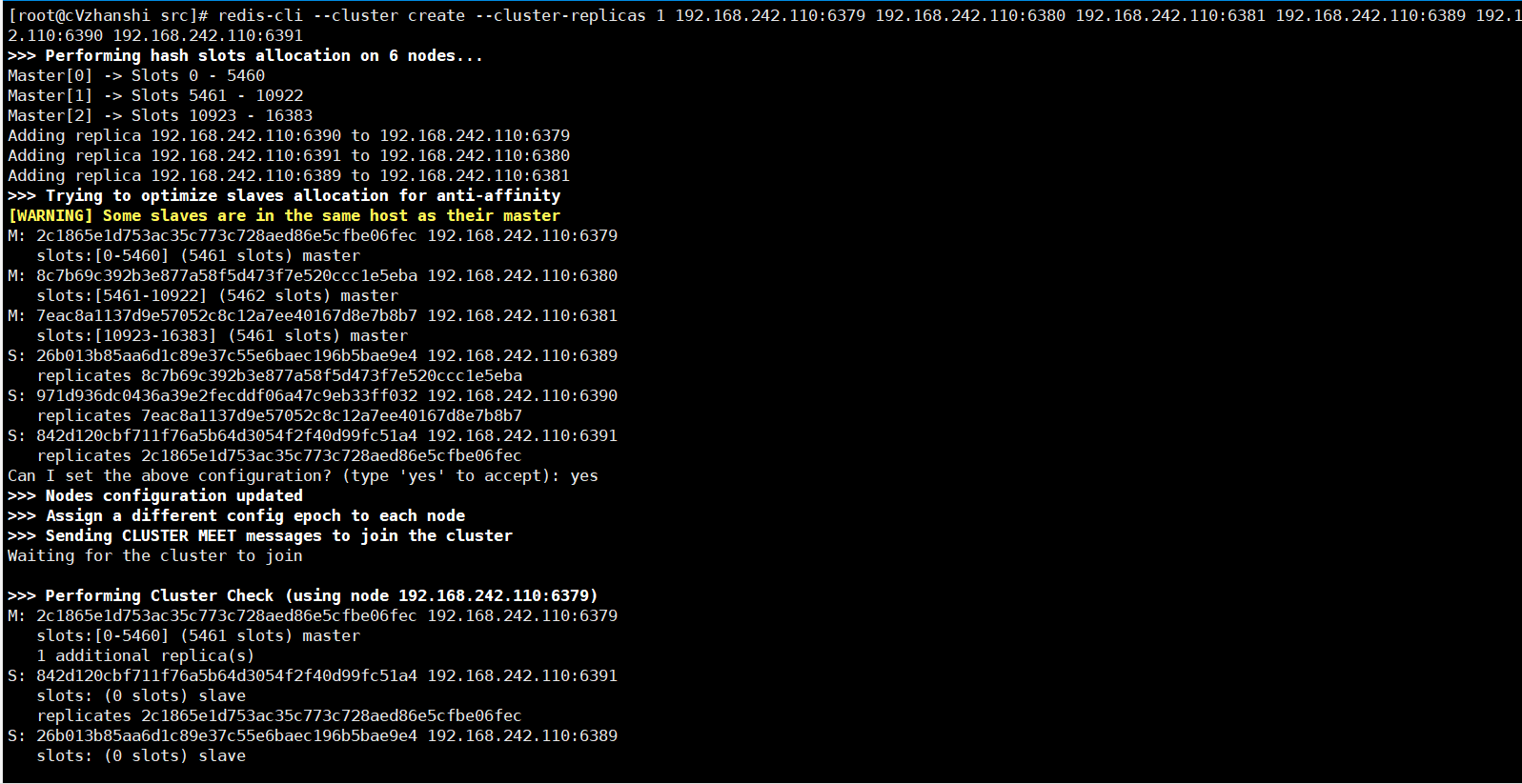

12.2 cluster construction

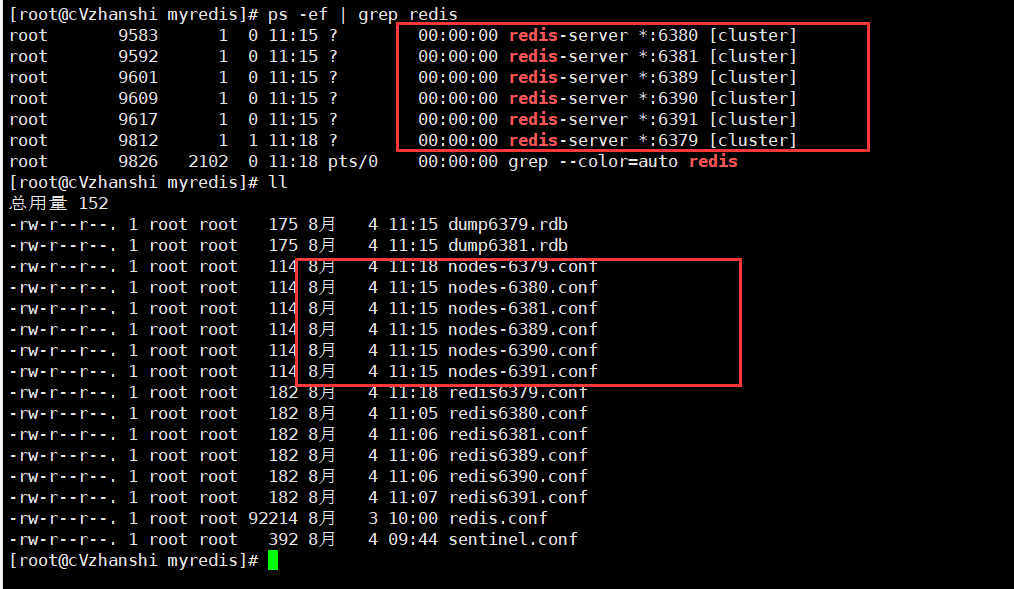

Target setup: six instances, on ports 6379, 6380, 6381 and 6389, 6390, 6391, with the first row and the second row paired as masters and slaves

- Delete all persistence files (RDB and AOF) in the folder

- Create six new configuration files with the following contents (identical except for the port numbers):

        include /myredis/redis.conf
        pidfile "/var/run/redis_6391.pid"
        port 6391
        dbfilename "dump6391.rdb"
        cluster-enabled yes
        cluster-config-file nodes-6391.conf
        cluster-node-timeout 15000

cluster-enabled yes turns on cluster mode

cluster-config-file nodes-6379.conf sets the node configuration file name

cluster-node-timeout 15000 sets the node timeout in milliseconds. Once a node has been unreachable for longer than this, the cluster automatically fails over from master to slave.

Tip: :%s/6379/6380 is the vim substitution command, useful for deriving each file from a copy

- Start the 6 services

Make sure the nodes-xxxx.conf files are generated

- Combine the six nodes into a cluster

Before combining, make sure all Redis instances have started and the nodes-xxxx.conf files have been generated normally

Go to the src directory of redis first

cd /opt/redis-6.2.1/src

Run the cluster creation command

redis-cli --cluster create --cluster-replicas 1 192.168.242.110:6379 192.168.242.110:6380 192.168.242.110:6381 192.168.242.110:6389 192.168.242.110:6390 192.168.242.110:6391

Note: use the machine's real IP address, not localhost or 127.0.0.1

--cluster-replicas 1 configures the cluster in the simplest way: one slave per master, giving exactly three master-slave pairs.

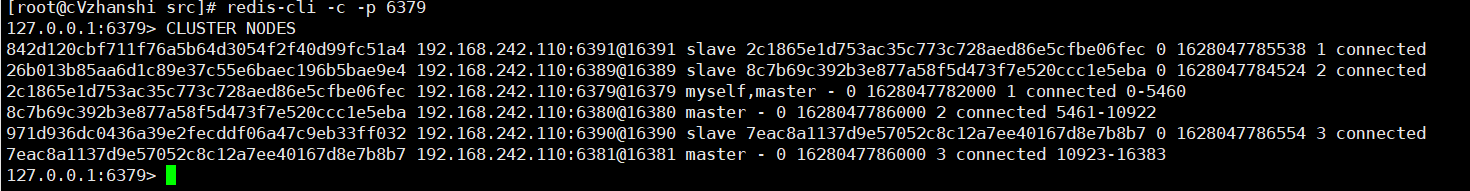

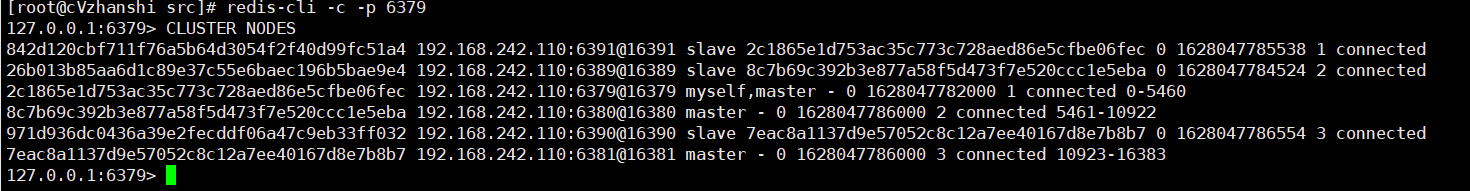

- Check whether the cluster was created successfully

        # Connect to Redis
        redis-cli -c -p 6379
        # View cluster information
        cluster nodes

12.3 Cluster operation and fault recovery

12.3.1 Cluster operation

- View cluster information

cluster nodes

- How does Redis cluster allocate these six nodes?

A cluster must have at least three master nodes.

The option --cluster-replicas 1 indicates that we want one slave node for each master node in the cluster.

The allocation tries to ensure that each master runs on a different IP address, and that each slave is not on the same IP address as its master.

- What are slots?

After running the cluster creation command, "[OK] All 16384 slots covered" appears

Note: a Redis cluster contains 16384 hash slots, and every key in the database belongs to one of them. The cluster uses the formula CRC16(key) % 16384 to calculate which slot a key belongs to, where CRC16(key) is the CRC16 checksum of the key.
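The formula CRC16(key) % 16384 can be tried out directly. The sketch below assumes the CRC16 variant is CRC16-CCITT in its XMODEM form (polynomial 0x1021, initial value 0), which is the one named in the Redis Cluster specification, and it deliberately ignores the {} grouping syntax for keys. The class and method names are made up for this example.

```java
import java.nio.charset.StandardCharsets;

// Sketch of the key-to-slot formula CRC16(key) % 16384 (hash tags not handled).
class HashSlotSketch {
    static final int SLOT_COUNT = 16384;

    // CRC16-CCITT (XMODEM): polynomial 0x1021, initial value 0, no bit reflection.
    static int crc16(byte[] bytes) {
        int crc = 0;
        for (byte b : bytes) {
            crc ^= (b & 0xFF) << 8;
            for (int i = 0; i < 8; i++) {
                crc = (crc & 0x8000) != 0 ? (crc << 1) ^ 0x1021 : crc << 1;
                crc &= 0xFFFF; // keep the value within 16 bits
            }
        }
        return crc;
    }

    static int slotFor(String key) {
        return crc16(key.getBytes(StandardCharsets.UTF_8)) % SLOT_COUNT;
    }

    public static void main(String[] args) {
        System.out.println(slotFor("k1")); // prints 12706
    }
}
```

The result for k1 agrees with slot 12706 used in the CLUSTER COUNTKEYSINSLOT example later in this section.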

Each node in the cluster is responsible for a portion of the slots. For example, in a cluster with three master nodes:

Node A handles slots 0 through 5460.

Node B handles slots 5461 to 10922.

Node C handles slots 10923 to 16383.

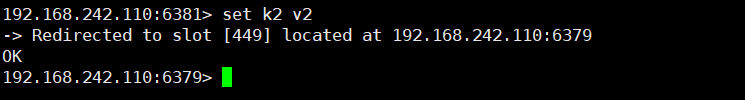
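One way to arrive at the three ranges listed above is to divide 16384 by the number of masters and round each range boundary. The sketch below does that and then looks up which node owns a given slot. It is an illustration that happens to reproduce the ranges in this example, not a statement of how redis-cli is implemented; the class and method names are hypothetical.

```java
// Sketch: split 16384 slots across masters and find the owner of a slot.
class SlotRangeSketch {
    static final int SLOT_COUNT = 16384;

    // Returns {firstSlot, lastSlot} for each of the given number of masters.
    static int[][] split(int masters) {
        int[][] ranges = new int[masters][2];
        double perNode = (double) SLOT_COUNT / masters;
        double cursor = 0.0;
        int first = 0;
        for (int i = 0; i < masters; i++) {
            int last = (int) Math.round(cursor + perNode - 1);
            if (i == masters - 1 || last > SLOT_COUNT - 1) {
                last = SLOT_COUNT - 1; // the final node takes whatever remains
            }
            ranges[i][0] = first;
            ranges[i][1] = last;
            first = last + 1;
            cursor += perNode;
        }
        return ranges;
    }

    // Index of the node whose range contains the slot, or -1 if none does.
    static int ownerOf(int slot, int[][] ranges) {
        for (int i = 0; i < ranges.length; i++) {
            if (slot >= ranges[i][0] && slot <= ranges[i][1]) {
                return i;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        int[][] ranges = split(3);
        for (int[] range : ranges) {
            System.out.println(range[0] + "-" + range[1]); // 0-5460, 5461-10922, 10923-16383
        }
        System.out.println(ownerOf(12706, ranges)); // prints 2, the third node (Node C)
    }
}
```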

- Write values to the cluster

Note: when setting multiple values at once with mset, the keys must all fall in the same slot, otherwise an error is reported. You can define a group with {}: keys whose content inside {} is identical are placed in the same slot
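The {} grouping works because only the text inside the braces is hashed when a key contains a non-empty {...} section. The following sketch extracts that part, assuming the rule is "first { followed by the first } after it, with at least one character in between". The key names name{user} and age{user} are hypothetical examples, and the method name is made up for illustration.

```java
// Sketch: which part of a key is hashed when the key uses the {} grouping syntax.
class HashTagSketch {

    static String hashedPart(String key) {
        int open = key.indexOf('{');
        if (open >= 0) {
            int close = key.indexOf('}', open + 1);
            if (close > open + 1) { // at least one character between the braces
                return key.substring(open + 1, close);
            }
        }
        return key; // no usable {} section: the whole key is hashed
    }

    public static void main(String[] args) {
        System.out.println(hashedPart("name{user}")); // prints user
        System.out.println(hashedPart("age{user}"));  // prints user
        System.out.println(hashedPart("k1"));         // prints k1
    }
}
```

Because name{user} and age{user} hash the same text, they land in the same slot and can be set together with a single mset.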

- Query values in the cluster

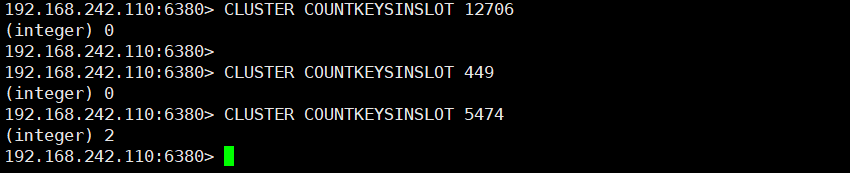

CLUSTER KEYSLOT k1 # Query the slot of key k1

CLUSTER COUNTKEYSINSLOT 12706 # Count the keys in the given slot. This only works on the node that owns the slot: slot 12706 is on the node at port 6381, so the query fails on the other ports

CLUSTER GETKEYSINSLOT 5474 2 # Return up to the given number of keys from the given slot

12.3.2 Fault recovery

If a master node goes offline, is its slave automatically promoted to master? Yes, once the 15-second node timeout (cluster-node-timeout 15000) has elapsed.

What happens to the master-slave relationship after the original master is restored? When it comes back, it rejoins as a slave.

If both the master and the slave responsible for a range of slots are down, can the Redis service continue?

If cluster-require-full-coverage is yes, the whole cluster stops serving requests.

If cluster-require-full-coverage is no, only the data in those slots can no longer be read or written; the rest of the cluster keeps working.

cluster-require-full-coverage is a parameter in redis.conf.
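The two outcomes described above amount to a small decision rule. The sketch below models it with a boolean array that records whether each slot still has a live node. It is a simplified illustration of the described behaviour, not Redis source code, and the names `canServe` and `slotCovered` are hypothetical.

```java
import java.util.Arrays;

// Sketch of how the full-coverage setting changes what the cluster will serve.
class CoverageSketch {
    static final int SLOT_COUNT = 16384;

    // slotCovered[i] is true while some live node still owns slot i.
    static boolean canServe(int slot, boolean[] slotCovered, boolean requireFullCoverage) {
        if (requireFullCoverage) {
            // Setting is yes: a single uncovered slot stops the whole cluster.
            for (boolean covered : slotCovered) {
                if (!covered) {
                    return false;
                }
            }
            return true;
        }
        // Setting is no: only requests for uncovered slots fail.
        return slotCovered[slot];
    }

    public static void main(String[] args) {
        boolean[] slotCovered = new boolean[SLOT_COUNT];
        Arrays.fill(slotCovered, true);
        // Master and slave of slots 5461-10922 are both down.
        Arrays.fill(slotCovered, 5461, 10923, false);

        System.out.println(canServe(100, slotCovered, true));   // prints false
        System.out.println(canServe(100, slotCovered, false));  // prints true
        System.out.println(canServe(6000, slotCovered, false)); // prints false
    }
}
```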

12.3.3 Using the cluster from Jedis

Even if the node you connect to is not the master that owns a key, the cluster automatically switches to the right master to store it. Masters handle writes; slaves handle reads.

The cluster is a decentralized master-slave cluster: data written through any master can be read through any other.

    import redis.clients.jedis.HostAndPort;
    import redis.clients.jedis.JedisCluster;

    /**
     * @author cVzhanshi
     * @create 2021-08-05 11:58
     */
    public class JedisClusterTest {
        public static void main(String[] args) {
            // Any single node of the cluster can serve as the entry point
            HostAndPort hostAndPort = new HostAndPort("192.168.242.110", 6381);
            JedisCluster jedisCluster = new JedisCluster(hostAndPort);

            jedisCluster.set("k5", "v5");
            String k5 = jedisCluster.get("k5");
            System.out.println(k5);
        }
    }

12.4 Advantages and disadvantages of Redis Cluster

Advantages

- Enables capacity expansion

- Spreads the load across nodes

- Decentralized configuration is relatively simple

Disadvantages

- Multi-key operations are not supported when the keys fall in different slots

- Multi-key Redis transactions and Lua scripts are likewise not supported across slots

- Because the cluster solution arrived late, many companies had already adopted other clustering approaches. Moving from a proxy-based or client-side sharding scheme to Redis Cluster requires a full migration rather than a gradual transition, which is complex.