
Introduction

Docker and other container technologies greatly simplify dependency management and software portability. In this series of articles, we explore the use of Docker in machine learning (ML) scenarios.

This series assumes that you are familiar with ML and containerization, especially Docker. You are welcome to download the project code.

In the previous article, we created a basic container for experimentation, training, and inference on a conventional Intel/AMD CPU. In this article, we will create a container that runs inference on an ARM processor, using a Raspberry Pi.

Setting up Docker on Raspberry Pi

Docker officially supports Raspberry Pi, so installing it is straightforward.

We have tested it successfully on a Raspberry Pi 4/400 with 4 GB RAM, running both Raspberry Pi OS (32-bit) and Ubuntu Server 20.04.2 LTS (64-bit).

Detailed installation instructions for all supported operating systems are available on the official Docker website.

The easiest way to install Docker is with the convenience script. However, it is not recommended for production environments. Fortunately, a "manual" installation is not too complicated.

For 64-bit Ubuntu Server, it looks like this:

```shell
$ sudo apt-get update
$ sudo apt-get install apt-transport-https ca-certificates curl gnupg
$ curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg
$ echo \
  "deb [arch=arm64 signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu \
  $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null
$ sudo apt-get update
$ sudo apt-get install docker-ce docker-ce-cli containerd.io
```

For other operating systems, the `ubuntu` part of the repository URLs and the `arch=arm64` value need to be changed accordingly.
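The two values that change are the distribution name in the repository URL and the architecture in `arch=`. As a minimal sketch, assuming the usual Debian architecture names (`arm64` for 64-bit ARM, `armhf` for 32-bit ARM, `amd64` for Intel/AMD), the repository line can be assembled from `platform.machine()`. The helper `docker_apt_source` and its mapping table are our own hypothetical illustration, not part of Docker's tooling; on Debian-based systems, `dpkg --print-architecture` reports the architecture directly.

```python
import platform
from typing import Optional

# Assumed mapping from kernel machine names (as reported by `uname -m`)
# to the Debian architecture names used in the Docker APT repository line.
MACHINE_TO_DEB_ARCH = {
    "aarch64": "arm64",
    "armv7l": "armhf",
    "x86_64": "amd64",
}

KEYRING = "/usr/share/keyrings/docker-archive-keyring.gpg"


def docker_apt_source(distro: str, codename: str, machine: Optional[str] = None) -> str:
    """Build the 'deb [...]' line for /etc/apt/sources.list.d/docker.list (hypothetical helper)."""
    machine = machine or platform.machine()
    try:
        arch = MACHINE_TO_DEB_ARCH[machine]
    except KeyError:
        raise ValueError("unsupported machine type: " + machine) from None
    return (
        "deb [arch={arch} signed-by={keyring}] "
        "https://download.docker.com/linux/{distro} {codename} stable"
    ).format(arch=arch, keyring=KEYRING, distro=distro, codename=codename)


if __name__ == "__main__":
    # Ubuntu Server 20.04 ("focal") on a 64-bit Raspberry Pi
    print(docker_apt_source("ubuntu", "focal", "aarch64"))
```

For Ubuntu 20.04 on a 64-bit Raspberry Pi this reproduces the line written by the `echo` command, with `$(lsb_release -cs)` resolved to `focal`.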

To run Docker commands as a non-root user, also add your user to the `docker` group, then log out and log back in:

```shell
$ sudo usermod -aG docker <your-user-name>
```
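Logging out is needed because group membership is read when a session starts: `usermod` updates `/etc/group`, but processes that are already running keep their old group list. A minimal sketch of that check using the standard `grp` and `os` modules (Unix only); the function name `in_docker_group` is hypothetical. In practice, running `docker run --rm hello-world` without `sudo` is the usual end-to-end test.

```python
import grp
import os


def in_docker_group(group_name: str = "docker") -> bool:
    """Return True if the *current process* already carries the given group.

    os.getgroups() reflects the groups of the running session, so after
    `usermod -aG docker` this stays False until you log in again.
    """
    try:
        gid = grp.getgrnam(group_name).gr_gid
    except KeyError:
        # The group does not exist, e.g. Docker is not installed yet.
        return False
    return gid in os.getgroups() or gid == os.getgid()


if __name__ == "__main__":
    print("docker group active in this session:", in_docker_group())
```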

Dockerfile for ARM

Although the base Python images we used in previous articles work on ARM processors, they may not be the best choice. As with Alpine Linux, many Python libraries are not available as precompiled wheels for the ARM architecture. They have to be compiled during installation, which can take a long time.

Alternatively, we can rely on the Python interpreter included in the operating system. This is not something we would normally do, but with Docker there is no harm in it, because we need only one Python environment per container. We lose some flexibility in the choice of Python version, but being able to pick from many precompiled system-level Python libraries saves a lot of time and reduces the size of the resulting image.

This is why we use the debian:buster-slim image as our base. It comes with Python 3.7, which should be sufficient for all our purposes, since it meets the requirements of all the libraries and AI/ML code we will run in it.

After several attempts, adding missing system libraries along the way, we arrived at the following Dockerfile for inference:

```dockerfile
FROM debian:buster-slim

ARG DEBIAN_FRONTEND=noninteractive

RUN apt-get update \
 && apt-get -y install --no-install-recommends build-essential libhdf5-dev pkg-config protobuf-compiler cython3 \
 && apt-get -y install --no-install-recommends python3 python3-dev python3-pip python3-wheel python3-opencv \
 && apt-get autoremove -y && apt-get clean -y && rm -rf /var/lib/apt/lists/*

RUN pip3 install --no-cache-dir setuptools==54.0.0

RUN pip3 install --no-cache-dir https://github.com/bitsy-ai/tensorflow-arm-bin/releases/download/v2.4.0/tensorflow-2.4.0-cp37-none-linux_aarch64.whl

ARG USERNAME=mluser
ARG USERID=1000
RUN useradd --system --create-home --shell /bin/bash --uid $USERID $USERNAME

USER $USERNAME
WORKDIR /home/$USERNAME/app
COPY app /home/$USERNAME/app

ENTRYPOINT ["python3", "predict.py"]
```
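Once the image is built, it can be useful to confirm that the key modules import correctly under the container's Python 3.7. A minimal sketch, assuming you run it with `python3` inside the container, for example by placing it in the `app` folder as a hypothetical `check_modules.py` and overriding the entry point with `docker run --rm --entrypoint python3 mld04_arm_predict check_modules.py`. It uses only `importlib` from the standard library.

```python
import importlib
from typing import Dict, Iterable, Optional

# Modules the Dockerfile is expected to provide: cv2 from the python3-opencv
# system package, numpy and tensorflow from pip.
EXPECTED_MODULES = ("cv2", "numpy", "tensorflow")


def module_versions(names: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each module to its __version__ ('unknown' if it has none), or None if it cannot be imported."""
    report = {}
    for name in names:
        try:
            module = importlib.import_module(name)
        except ImportError:
            report[name] = None
            continue
        report[name] = getattr(module, "__version__", "unknown")
    return report


if __name__ == "__main__":
    for name, version in module_versions(EXPECTED_MODULES).items():
        print("{:<12} {}".format(name, version or "MISSING"))
```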

Note that here we install OpenCV from the python3-opencv system package with apt-get instead of pip. However, we cannot install NumPy the same way, because the version shipped with the operating system does not match TensorFlow's requirements. Unfortunately, this means that NumPy and some other TensorFlow dependencies have to be compiled during the build.
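The mismatch can be seen with a PEP 440 "compatible release" (`~=`) comparison. As an assumption for illustration: TensorFlow 2.4.0 declares `numpy ~= 1.19.2`, while Debian Buster's `python3-numpy` package is version 1.16.2. The sketch below handles only plain numeric versions such as `1.19.5`; pip applies the full PEP 440 rules.

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Parse a purely numeric version such as '1.19.2' into (1, 19, 2)."""
    return tuple(int(part) for part in text.split("."))


def is_compatible_release(candidate: str, spec: str) -> bool:
    """Simplified PEP 440 '~=' check.

    For spec '1.19.2' this means: candidate >= 1.19.2 and candidate == 1.19.*
    """
    cand = parse_version(candidate)
    base = parse_version(spec)
    if len(base) < 2:
        raise ValueError("'~=' needs at least two version components")
    prefix = base[:-1]
    return cand >= base and cand[: len(prefix)] == prefix


if __name__ == "__main__":
    # Assumed versions: Debian Buster's NumPy and two newer releases,
    # checked against TensorFlow 2.4.0's assumed requirement.
    for numpy_version in ("1.16.2", "1.19.5", "1.20.0"):
        print(numpy_version, is_compatible_release(numpy_version, "1.19.2"))
```

Under these assumptions, only 1.19.x releases from 1.19.2 on qualify, which is why pip builds a suitable NumPy instead of reusing the system package.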

Nevertheless, TensorFlow itself does not need to be compiled, because we use a prebuilt Raspberry Pi wheel published on GitHub. If you prefer the 32-bit Raspberry Pi OS, you need to change the TensorFlow wheel URL in the Dockerfile accordingly.
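A wheel's file name encodes where it can be installed: `{name}-{version}-{python tag}-{abi tag}-{platform tag}.whl`, following the wheel naming convention of PEP 427. The wheel in the Dockerfile is tagged `cp37` and `linux_aarch64`, so it fits only CPython 3.7 on 64-bit ARM; on 32-bit Raspberry Pi OS the platform tag is typically `linux_armv7l`, which is why the link must change. A minimal sketch that parses the name and compares it with the running interpreter. It assumes CPython, ignores the ABI tag, and skips the manylinux rules that pip also applies.

```python
import sys
import sysconfig
from typing import NamedTuple, Tuple

# File name of the TensorFlow wheel referenced in the Dockerfile.
TF_WHEEL = "tensorflow-2.4.0-cp37-none-linux_aarch64.whl"


class WheelTags(NamedTuple):
    name: str
    version: str
    python_tags: Tuple[str, ...]
    abi_tag: str
    platform_tags: Tuple[str, ...]


def parse_wheel_filename(filename: str) -> WheelTags:
    """Split '{name}-{version}[-{build}]-{python}-{abi}-{platform}.whl' into its parts."""
    if not filename.endswith(".whl"):
        raise ValueError("not a wheel file: " + filename)
    parts = filename[: -len(".whl")].split("-")
    if len(parts) not in (5, 6):  # 6 parts when an optional build tag is present
        raise ValueError("unexpected wheel file name: " + filename)
    python_tag, abi_tag, platform_tag = parts[-3:]
    # Compressed tag sets such as 'py2.py3' list alternatives separated by dots.
    return WheelTags(parts[0], parts[1], tuple(python_tag.split(".")),
                     abi_tag, tuple(platform_tag.split(".")))


def local_tags() -> Tuple[str, str]:
    """Tags of the running CPython, e.g. ('cp37', 'linux_aarch64')."""
    major, minor = sys.version_info[:2]
    platform_tag = sysconfig.get_platform().replace("-", "_").replace(".", "_")
    return "cp{}{}".format(major, minor), platform_tag


def is_installable(filename: str, python_tag: str, platform_tag: str) -> bool:
    """Simplified compatibility check between a wheel name and local tags."""
    tags = parse_wheel_filename(filename)
    generic = "py" + python_tag[2:3]  # e.g. 'cp37' -> 'py3'
    python_ok = python_tag in tags.python_tags or generic in tags.python_tags
    platform_ok = platform_tag in tags.platform_tags or "any" in tags.platform_tags
    return python_ok and platform_ok


if __name__ == "__main__":
    py_tag, plat_tag = local_tags()
    print("local tags:", py_tag, plat_tag)
    print("TensorFlow wheel installable here:", is_installable(TF_WHEEL, py_tag, plat_tag))
```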

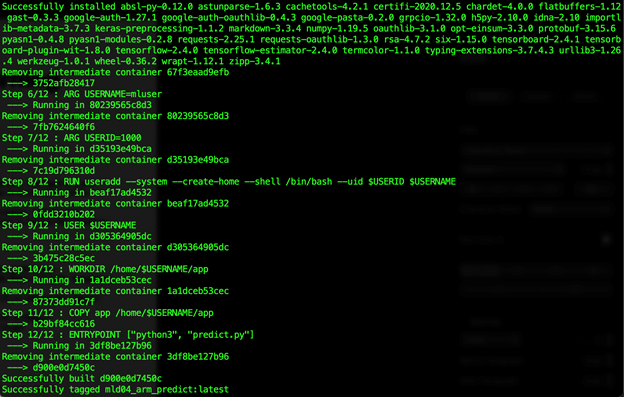

Building mirrors and running containers

After downloading the project code (including the trained model and sample data), we can build our image:

```shell
$ docker build --build-arg USERID=$(id -u) -t mld04_arm_predict .
```
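The `--build-arg USERID=$(id -u)` option overrides the `ARG USERID=1000` default in the Dockerfile, so the `mluser` account inside the image gets the same numeric UID as your host user, which helps avoid permission problems on the mounted `data` folder. If you script the build from Python, a minimal sketch might look like this. The helpers `docker_build_command` and `build_image` are hypothetical, and the code is Unix-only because it uses `os.getuid()`.

```python
import os
import subprocess
from typing import List


def docker_build_command(tag: str = "mld04_arm_predict", context: str = ".") -> List[str]:
    """Return the argv equivalent of: docker build --build-arg USERID=$(id -u) -t <tag> <context>."""
    return [
        "docker", "build",
        "--build-arg", "USERID={}".format(os.getuid()),  # same UID as the current host user
        "-t", tag,
        context,
    ]


def build_image(tag: str = "mld04_arm_predict", context: str = ".") -> None:
    """Run the build; check=True raises CalledProcessError if docker fails."""
    subprocess.run(docker_build_command(tag, context), check=True)


if __name__ == "__main__":
    # Only print the command here; call build_image() to actually build.
    print(" ".join(docker_build_command()))
```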

This may take more than 30 minutes to complete (at least on a Raspberry Pi 4/400). That is not lightning fast, but it would take several times longer if more libraries had to be compiled.

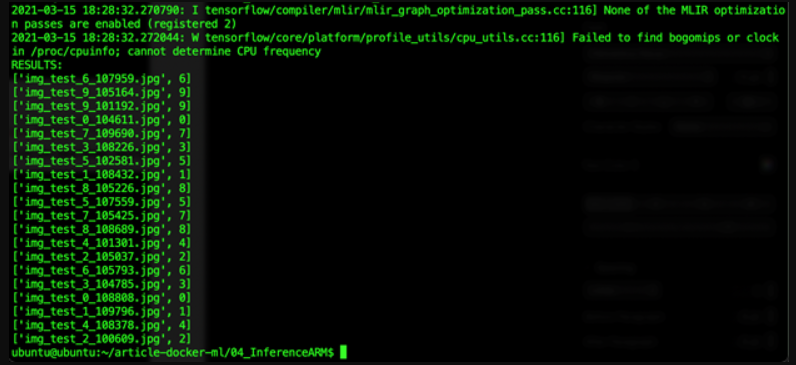

Finally, we can run our prediction on the "edge":

```shell
$ docker run -v $(pwd)/data:/home/mluser/data --rm --user $(id -u):$(id -g) mld04_arm_predict --images_path /home/mluser/data/test_mnist_images/*.jpg
```

As in the previous article, we map only the data folder, because the application and the model are stored inside the container.
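Arguments placed after the image name in `docker run` are appended to the exec-form `ENTRYPOINT`, so the container effectively runs `python3 predict.py --images_path /home/mluser/data/test_mnist_images/*.jpg`. Because that path does not exist on the host, bash in its default configuration passes the unmatched `*.jpg` pattern through unchanged, so the script has to expand it itself. We have not reproduced the project's `predict.py` here. The following is a hypothetical sketch of only the argument handling, with model loading and prediction left out.

```python
import argparse
import glob
from typing import List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Run predictions on image files (sketch).")
    parser.add_argument(
        "--images_path",
        required=True,
        help="glob pattern for the input images, e.g. /home/mluser/data/test_mnist_images/*.jpg",
    )
    return parser.parse_args(argv)


def resolve_images(pattern: str) -> List[str]:
    """Expand the pattern inside the container; sorted for a stable output order."""
    return sorted(glob.glob(pattern))


def main(argv: Optional[List[str]] = None) -> None:
    args = parse_args(argv)
    image_paths = resolve_images(args.images_path)
    if not image_paths:
        raise SystemExit("no images match " + args.images_path)
    for path in image_paths:
        # Hypothetical: load the image, run the model, print the predicted digit.
        print(path)


if __name__ == "__main__":
    main()
```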

The expected results are as follows:

Summary

We have successfully built a container and run TensorFlow predictions on a Raspberry Pi. By relying on precompiled system Python libraries, we sacrificed some flexibility, but the reduction in image build time and final image size is well worth it.

In the final article of this series, we will return to Intel/AMD CPUs. This time, we will use a GPU to speed up our computations.

https://www.codeproject.com/Articles/5300727/Running-AI-Models-in-Docker-Containers-on-ARM-Devi