1 Theory

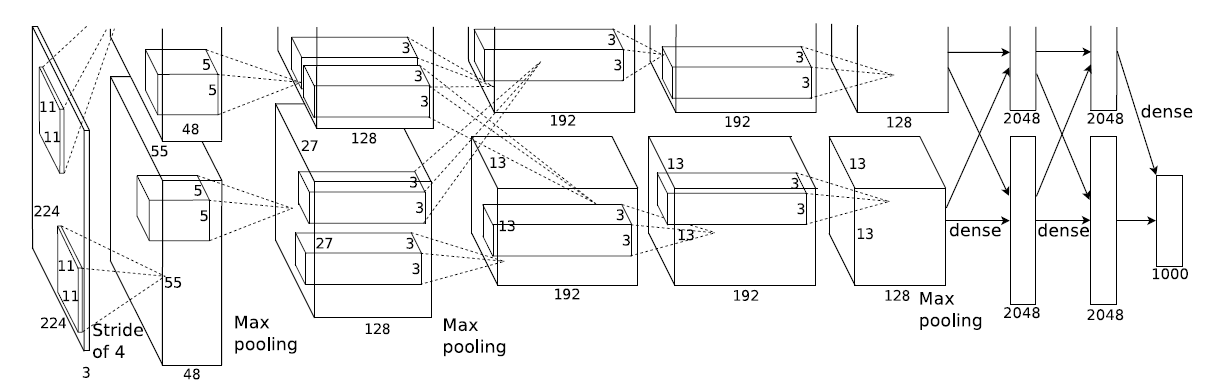

AlexNet is an 8-layer deep convolutional neural network (five convolutional layers followed by three fully connected layers) that is mainly applied to image classification.

Main contributions of the paper:

- To compensate for limited training data, data augmentation is used, for example random image cropping and flipping.

- For the activation function, ReLU is used instead of Sigmoid. Sigmoid saturates when its output is close to 0 or 1, so its gradient becomes very small and training is difficult; ReLU does not saturate for positive inputs.

- To reduce overfitting, Dropout is used.

- For the model structure, the convolution kernel sizes, the number of layers, and the strides are changed.
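The ReLU point in the list above can be checked numerically. The following is a minimal sketch that compares the derivative of Sigmoid with the derivative of ReLU at a few inputs. The function names and sample inputs are illustrative choices and do not come from the paper.

```python
import math


def sigmoid(x):
    """Logistic sigmoid, 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    """Derivative of sigmoid: s(x) * (1 - s(x)); its maximum is 0.25 at x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)


def relu_grad(x):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0


# Illustrative inputs: large |x| pushes the sigmoid output toward 0 or 1.
for x in (-10.0, -2.0, 0.5, 2.0, 10.0):
    print(f"x={x:6.1f}  sigmoid={sigmoid(x):.5f}  "
          f"sigmoid'={sigmoid_grad(x):.5f}  relu'={relu_grad(x):.1f}")
```

For large positive or negative inputs the Sigmoid derivative is close to zero, while the ReLU derivative stays at 1 for every positive input.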

Model structure: five convolutional layers. The convolution kernel of the first layer is 11 × 11, that of the second layer is 5 × 5, and those of the other three layers are 3 × 3.

Specific structure:

Layer 1: convolutional layer

The convolution kernel size is 11 × 11, the number of input channels equals the number of channels of the input image, the number of output channels is 96, and the stride is 4.

The pooling layer that follows has a 3 × 3 window and a stride of 2.
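The spatial size after a convolution or pooling layer follows from the kernel size, stride, and padding. The helper below is a minimal sketch of the standard formula floor((n + 2p - k) / s) + 1; `out_size` is a name chosen here. The description of layer 1 gives neither an input resolution nor a padding, so the values 224 and 3 are assumptions taken from the Practice code in this article.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Output width/height of a conv or pooling layer applied to an n x n input."""
    return (n + 2 * padding - kernel) // stride + 1


# Layer 1, assuming a 224 x 224 input and a padding of 3 (as in the Practice code).
after_conv1 = out_size(224, kernel=11, stride=4, padding=3)
after_pool1 = out_size(after_conv1, kernel=3, stride=2)
print(after_conv1, after_pool1)  # 55 27
```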

Layer 2: convolutional layer

The convolution kernel size is 5 × 5, the number of input channels is 96, the number of output channels is 256, and the stride is 1.

The pooling layer that follows has a 3 × 3 window and a stride of 2.

Layer 3: convolutional layer

The convolution kernel size is 3 × 3, the number of input channels is 256, the number of output channels is 384, and the stride is 1.

Layer 4: convolutional layer

The convolution kernel size is 3 × 3, the number of input channels is 384, the number of output channels is 384, and the stride is 1.

Layer 5: convolutional layer

The convolution kernel size is 3 × 3, the number of input channels is 384, the number of output channels is 256, and the stride is 1.

The pooling layer that follows has a 3 × 3 window and a stride of 2.

Layer 6: fully connected layer

The input size is the flattened output of the previous layer, and the output size is 4096.

Dropout probability is 0.5.
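The input size of layer 6 can be worked out by tracing the feature map through the five convolutional layers. The sketch below assumes a 224 × 224 RGB input, a stride of 1 for the second convolution, and the padding values used in the Practice code (3, 2, 1, 1, 1); the paddings and the input resolution are not given in the layer descriptions, and the `layers` list and `trace` function are illustrative names.

```python
# (name, kernel, stride, padding, out_channels); pooling keeps the channel count.
layers = [
    ("conv1", 11, 4, 3, 96), ("pool1", 3, 2, 0, None),
    ("conv2", 5, 1, 2, 256), ("pool2", 3, 2, 0, None),
    ("conv3", 3, 1, 1, 384),
    ("conv4", 3, 1, 1, 384),
    ("conv5", 3, 1, 1, 256), ("pool5", 3, 2, 0, None),
]


def trace(size, channels, layers):
    """Return (size, channels) after applying every layer in order."""
    for name, k, s, p, out_ch in layers:
        size = (size + 2 * p - k) // s + 1
        channels = out_ch if out_ch is not None else channels
        print(f"{name}: {channels} x {size} x {size}")
    return size, channels


size, channels = trace(224, 3, layers)
flat_features = channels * size * size
print(flat_features)  # 9216 = 256 * 6 * 6
```

Under these assumptions the first fully connected layer receives 9216 input features.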

Layer 7: fully connected layer

The input size is 4096 and the output size is 4096.

Dropout probability is 0.5.
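As a rough illustration of what a Dropout probability of 0.5 means, the sketch below implements inverted dropout on a plain Python list. During training each activation is zeroed with probability p and the survivors are scaled by 1 / (1 - p); at evaluation time the input passes through unchanged. This mirrors the documented behavior of `nn.Dropout` but is not its implementation, and `dropout` and its arguments are names chosen here.

```python
import random


def dropout(values, p=0.5, training=True, rng=random):
    """Inverted dropout on a list of floats (illustrative, not nn.Dropout itself)."""
    if not training or p == 0.0:
        return list(values)
    keep = 1.0 - p
    # Keep a value (scaled up by 1 / keep) with probability keep, else zero it.
    return [v / keep if rng.random() >= p else 0.0 for v in values]


rng = random.Random(0)  # fixed seed so repeated runs give the same mask
activations = [1.0] * 10
dropped = dropout(activations, p=0.5, rng=rng)
print(dropped)                               # a mix of 0.0 and 2.0
print(dropout(activations, training=False))  # unchanged at evaluation time
```

Scaling the surviving activations keeps the expected value of each unit the same in training and evaluation.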

Layer 8: fully connected layer

The input size is 4096 and the output size is the number of classes.
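To get a feel for where the model's weights sit, the sketch below counts weights and biases per layer from the sizes listed above. It assumes a 3-channel input, 10 output classes, and a flattened input of 256 × 6 × 6 to layer 6 (the values used in the Practice code); the helper names are illustrative.

```python
def conv_params(in_ch, out_ch, kernel):
    """Weights plus biases of a conv layer with a square kernel."""
    return in_ch * out_ch * kernel * kernel + out_ch


def fc_params(in_features, out_features):
    """Weights plus biases of a fully connected layer."""
    return in_features * out_features + out_features


n_classes = 10  # assumption, matches the Practice example
conv_total = sum([
    conv_params(3, 96, 11),
    conv_params(96, 256, 5),
    conv_params(256, 384, 3),
    conv_params(384, 384, 3),
    conv_params(384, 256, 3),
])
fc_total = sum([
    fc_params(256 * 6 * 6, 4096),
    fc_params(4096, 4096),
    fc_params(4096, n_classes),
])
print(conv_total, fc_total)
share = fc_total / (conv_total + fc_total)
print(f"share of parameters in fully connected layers: {share:.1%}")
```

Under these assumptions the three fully connected layers hold more than 90% of the parameters, and these are the layers where Dropout is applied.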

Note: of the five convolutional layers, only the first two are each followed directly by a pooling layer. There is no pooling layer after the third or fourth convolutional layer; instead, a single pooling layer is added after the three consecutive convolutional layers 3, 4, and 5.

2 Practice

# ImageNet Classification with Deep Convolutional Neural Networks

import torch
import torch.nn as nn


class AlexNet(nn.Module):
    def __init__(self, input_channel, n_classes):
        super(AlexNet, self).__init__()
        self.conv1 = nn.Sequential(
            # Transforms (batch_size x input_channel x 224 x 224) into (batch_size x 96 x 55 x 55).
            # From floor((n_h + 2 * p - k_s) / s) + 1:
            # floor((224 + 2 * 3 - 11) / 4) + 1 => floor(219 / 4) + 1 => 54 + 1 => 55
            nn.Conv2d(input_channel, 96, kernel_size=11, stride=4, padding=3),  # (batch_size * 96 * 55 * 55)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2))  # (batch_size * 96 * 27 * 27)
        self.conv2 = nn.Sequential(
            nn.Conv2d(96, 256, kernel_size=5, padding=2),  # (batch_size * 256 * 27 * 27)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2))  # (batch_size * 256 * 13 * 13)
        self.conv3 = nn.Sequential(
            nn.Conv2d(256, 384, kernel_size=3, padding=1),  # (batch_size * 384 * 13 * 13)
            nn.ReLU(inplace=True),
            nn.Conv2d(384, 384, kernel_size=3, padding=1),  # (batch_size * 384 * 13 * 13)
            nn.ReLU(inplace=True),
            nn.Conv2d(384, 256, kernel_size=3, padding=1),  # (batch_size * 256 * 13 * 13)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),  # (batch_size * 256 * 6 * 6)
            nn.Flatten())  # (batch_size * 9216)
        self.fc = nn.Sequential(
            nn.Linear(256 * 6 * 6, 4096),  # (batch_size * 4096)
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(4096, 4096),  # (batch_size * 4096)
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(4096, n_classes))  # (batch_size * n_classes)
        # Apply Xavier initialization to every Conv2d and Linear layer.
        self.conv1.apply(self.init_weights)
        self.conv2.apply(self.init_weights)
        self.conv3.apply(self.init_weights)
        self.fc.apply(self.init_weights)

    def init_weights(self, layer):
        if isinstance(layer, (nn.Linear, nn.Conv2d)):
            nn.init.xavier_uniform_(layer.weight)

    def forward(self, x):
        out = self.conv1(x)
        out = self.conv2(out)
        out = self.conv3(out)  # ends with Flatten, so out is (batch_size * 9216)
        out = self.fc(out)
        return out


if __name__ == '__main__':
    # Shape check with a random batch of 16 RGB images of size 224 x 224.
    data = torch.randn(size=(16, 3, 224, 224))
    model = AlexNet(input_channel=3, n_classes=10)
    classes = model(data)
    print(classes.size())  # torch.Size([16, 10])

Reference: https://zhuanlan.zhihu.com/p/86447716