Ancient poetry is a treasure of Chinese culture. I remember that when I was an exchange student in South Korea, I saw students there learning our ancient poetry, both in the original Chinese and in translation. I felt proud, and it even brought a few familiar poems back to mind.

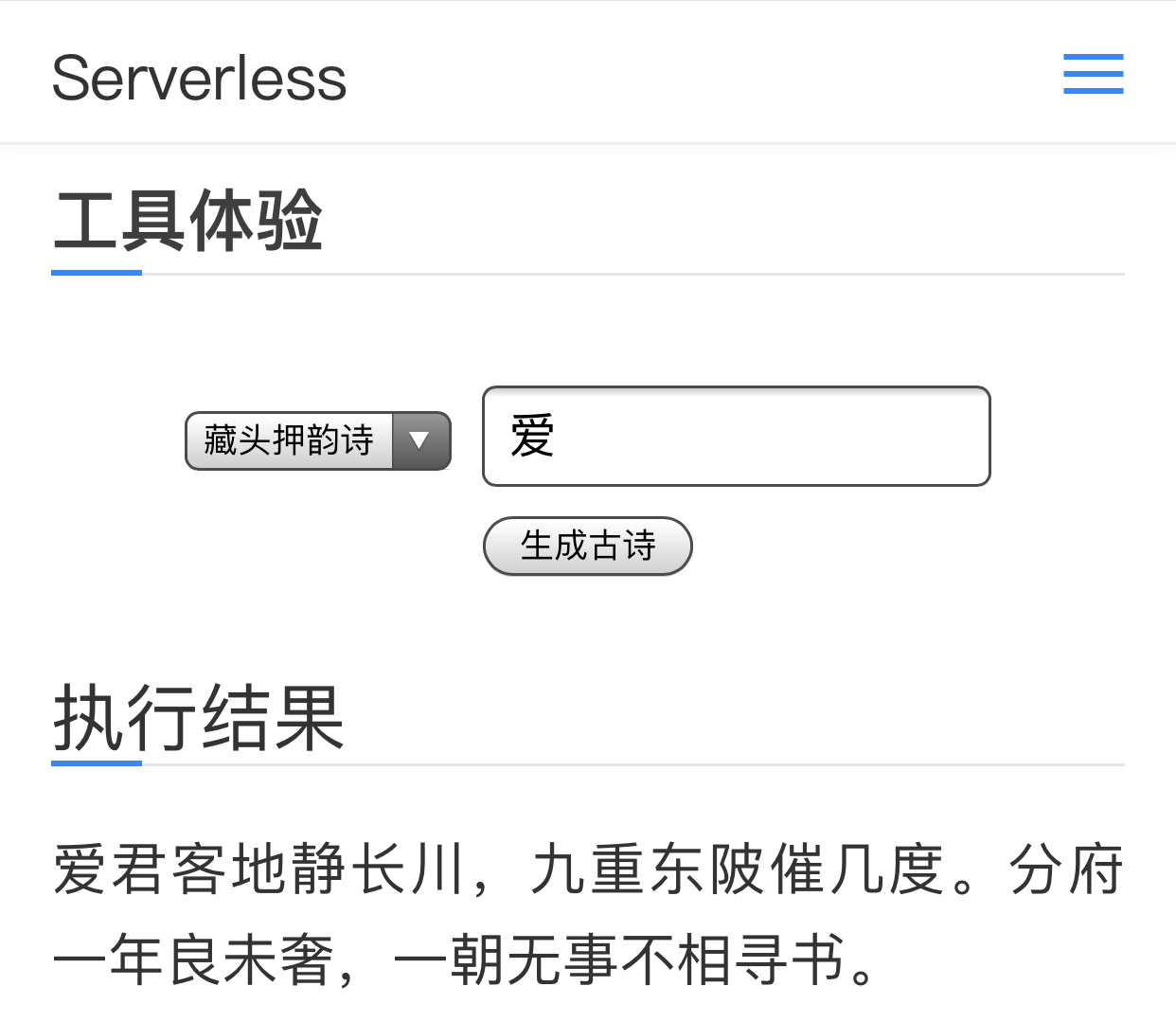

In this article, we will use deep learning to generate some ancient poetry, and then deploy the model on a Serverless architecture to provide a Serverless-based API for generating ancient poetry.

Project construction

Generating ancient poetry is essentially text generation. For deep learning based text generation, a good entry-level read is Andrej Karpathy's blog. He uses vivid examples to show how Char-RNN (a character-level recurrent neural network) can learn from text datasets and then automatically generate decent text.

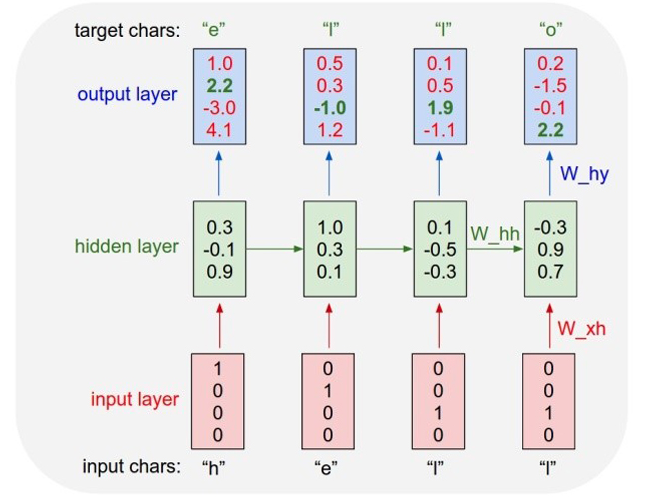

The diagram above shows the principle of Char-RNN. To teach the model to write "hello", both the input layer and the output layer of Char-RNN work on characters: given "h" it should output "e", and given "e" it should output the following "l".

In the input layer, we can encode each character as a one-hot vector, that is, a vector in which exactly one element is 1. For example, "h" is encoded as "1000", "e" as "0100", and "l" as "0010". The training goal of the RNN is to make the generated next character match the target output in the training sample as closely as possible. In the figure above, after the first two characters and the third input "l", the predicted vector for the next character is <0.1, 0.5, 1.9, -1.1>. Its largest value is in the third dimension, which corresponds to "0010", exactly "l", so this prediction is correct. The output vector obtained from the first input "h", however, is largest in the fourth dimension, which does not correspond to "e", so there is a cost.
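The encoding and the "largest dimension wins" rule can be sketched in a few lines of Python. This is only an illustration: the fourth vocabulary entry "o" (encoded as "0001") and the use of softmax cross-entropy as the cost are assumptions, since the text only says that a wrong prediction has "a cost".

```python
import math

# Assumed 4-character vocabulary for "hello"; "o" is not listed in the text.
VOCAB = ["h", "e", "l", "o"]

def one_hot(ch):
    """Return a vector with a single 1 at the character's index."""
    return [1 if c == ch else 0 for c in VOCAB]

def predict(scores):
    """Pick the character whose dimension has the largest score."""
    return VOCAB[scores.index(max(scores))]

def cost(scores, target):
    """Softmax cross-entropy between the scores and the target character
    (an assumed choice of cost function)."""
    exps = [math.exp(s) for s in scores]
    prob = exps[VOCAB.index(target)] / sum(exps)
    return -math.log(prob)

# Output vector quoted in the text for the third input "l" (target: "l").
scores_l = [0.1, 0.5, 1.9, -1.1]

print(one_hot("h"), one_hot("e"), one_hot("l"))
print(predict(scores_l))    # l
print(cost(scores_l, "l"))  # small but non-zero: the prediction is right, not certain
```

Raising the score of the target dimension lowers the cost, which is the direction training pushes the network in.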

Learning is the process of steadily reducing this cost. A trained model can predict the next character for any input character, and by feeding each prediction back in as the next input, it can generate whole sentences or paragraphs.
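The generation loop itself is simple and can be sketched as follows. The lookup table `NEXT_CHAR_PROBS` is a hypothetical stand-in for a trained model: a real Char-RNN would compute the next-character distribution from its hidden state rather than from the previous character alone.

```python
import random

# Hypothetical stand-in for a trained model: for each character, the
# probability of each possible next character.
NEXT_CHAR_PROBS = {
    "h": {"e": 1.0},
    "e": {"l": 1.0},
    "l": {"l": 0.5, "o": 0.5},
    "o": {"h": 1.0},
}

def sample_next(ch, rng):
    """Sample the next character from the distribution for `ch`."""
    chars = list(NEXT_CHAR_PROBS[ch])
    weights = [NEXT_CHAR_PROBS[ch][c] for c in chars]
    return rng.choices(chars, weights=weights, k=1)[0]

def generate(start, length, seed=0):
    """Repeatedly feed the last generated character back in as the input."""
    rng = random.Random(seed)
    text = start
    while len(text) < length:
        text += sample_next(text[-1], rng)
    return text

print(generate("h", 10))
```

Sampling from the distribution, instead of always taking the most likely character, is what lets the same model produce different text on different runs.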

The project in this article is based on an existing GitHub project: https://github.com/norybaby/poet

Clone the code and install the dependencies:

pip3 install tensorflow==1.14 word2vec numpy

Then start training:

python3 train.py

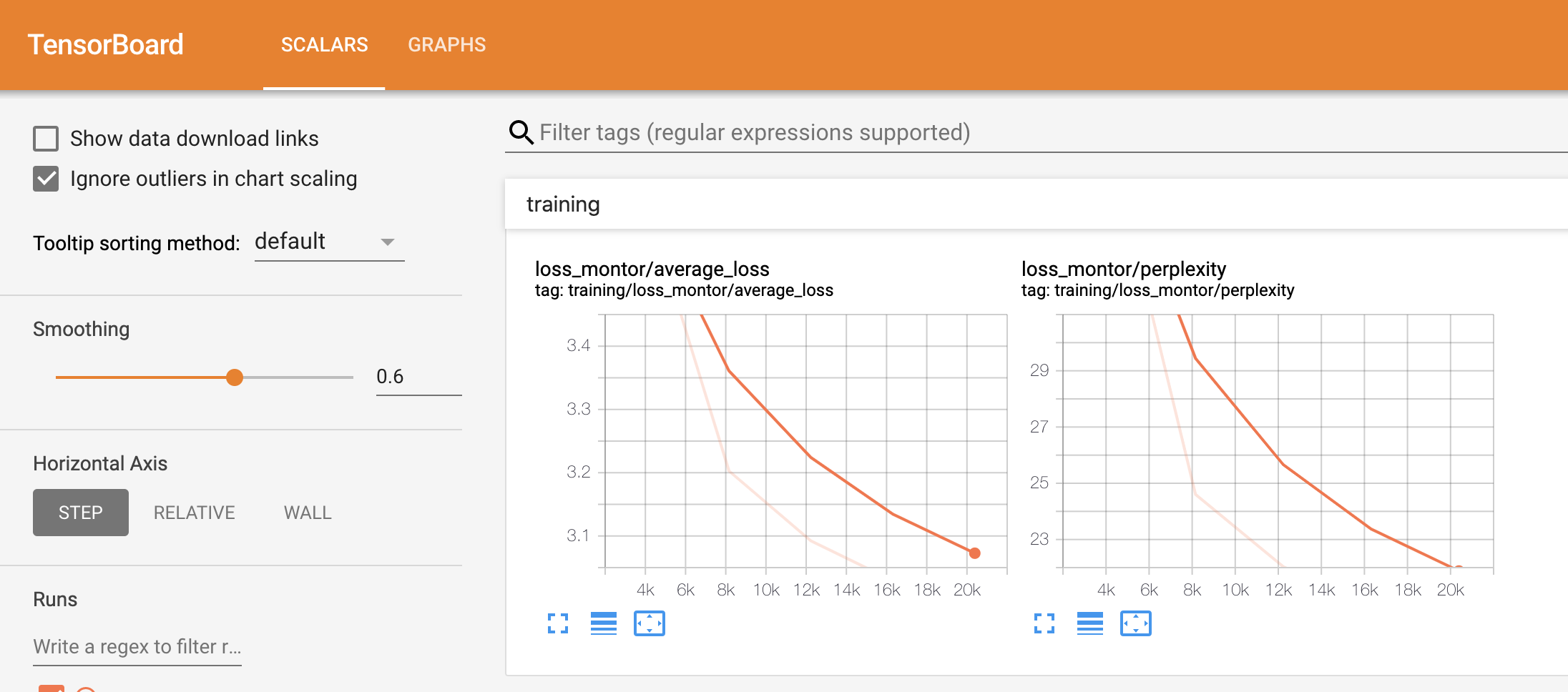

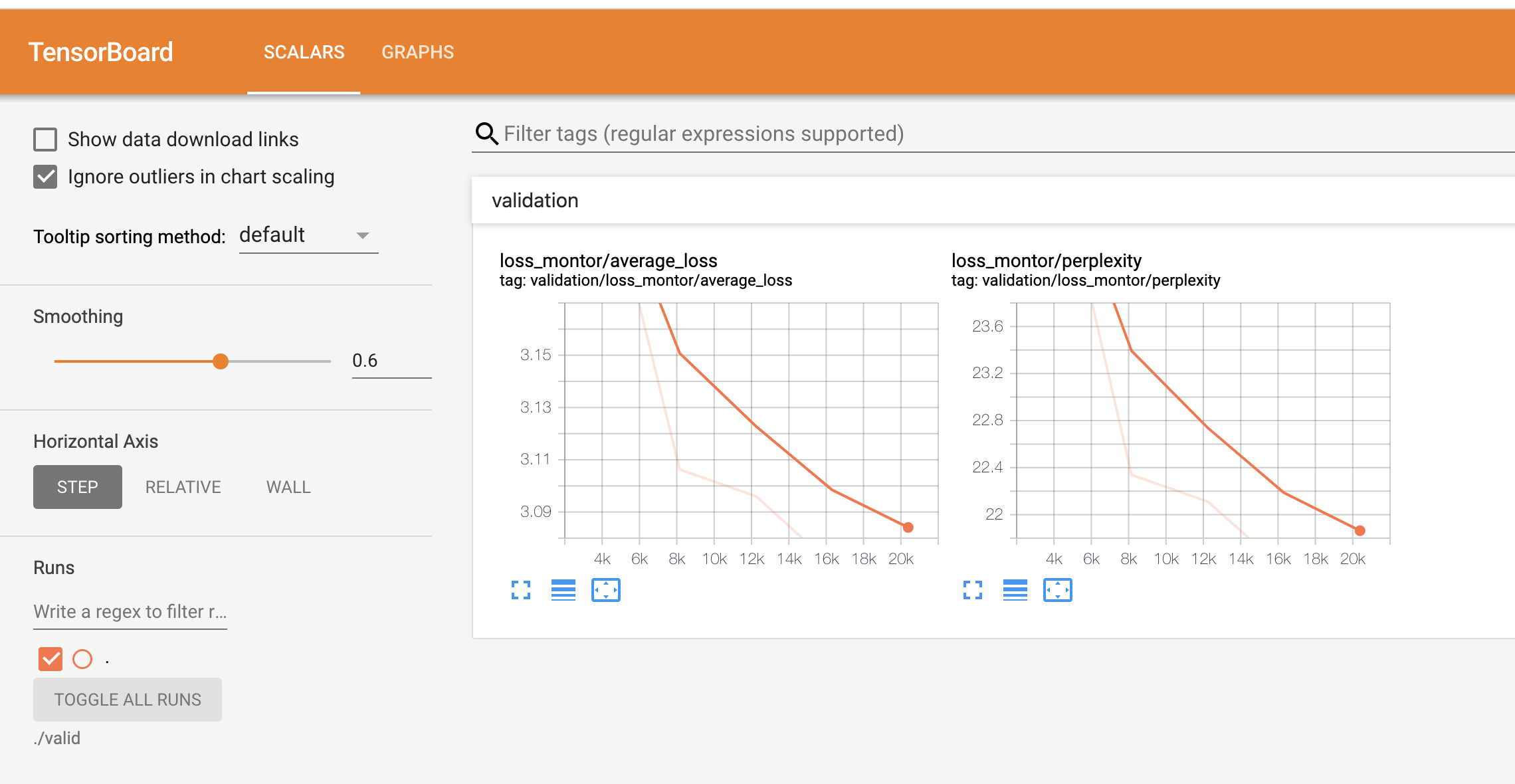

After training, you can see the results. Multiple models are generated in the output_poem folder, and we only need to keep the best one. For example, this is the JSON file generated after my training:

    {
      "best_model": "output_poem/best_model/model-20390",
      "best_valid_ppl": 21.441762924194336,
      "latest_model": "output_poem/save_model/model-20390",
      "params": {
        "batch_size": 16,
        "cell_type": "lstm",
        "dropout": 0.0,
        "embedding_size": 128,
        "hidden_size": 128,
        "input_dropout": 0.0,
        "learning_rate": 0.005,
        "max_grad_norm": 5.0,
        "num_layers": 2,
        "num_unrollings": 64
      },
      "test_ppl": 25.83984375
    }
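To pick out the checkpoint to keep, the file can be read with a few lines of Python. This is a sketch: the JSON is inlined in shortened form so the example runs on its own, and it assumes perplexity is defined in the usual way, as the exponential of the mean per-character cross-entropy.

```python
import json
import math

# Shortened copy of the JSON above, inlined so the example is self-contained.
RESULT_JSON = """
{
  "best_model": "output_poem/best_model/model-20390",
  "best_valid_ppl": 21.441762924194336,
  "latest_model": "output_poem/save_model/model-20390",
  "test_ppl": 25.83984375
}
"""

def summarize(result_text):
    """Extract the best checkpoint and convert perplexities back to losses."""
    result = json.loads(result_text)
    return {
        "checkpoint": result["best_model"],
        # Assumption: ppl = exp(mean loss), so log() recovers the loss in nats.
        "valid_loss": math.log(result["best_valid_ppl"]),
        "test_loss": math.log(result["test_ppl"]),
    }

summary = summarize(RESULT_JSON)
print(summary["checkpoint"])
print(round(summary["valid_loss"], 3), round(summary["test_loss"], 3))
```

A lower perplexity means the model is less "surprised" by the held-out text, which is why the checkpoint with the best validation perplexity is the one worth keeping.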

At this point, I only need to keep the output_poem/best_model/model-20390 model.

Deploy Online

In the project directory, install the required dependencies:

pip3 install word2vec numpy -t ./

TensorFlow and some other packages are built into Tencent Cloud Functions, so there is no need to install them here. Note that the numpy package needs to be built in a CentOS + Python 3.6 environment. You can also package it with the small tool made earlier: https://www.serverlesschina.com/35.html

When finished, write the function entry file:

    import json
    import uuid

    from write_poem import WritePoem, start_model

    # Load the model once per container so warm invocations can reuse it.
    writer = start_model()


    def return_msg(error, msg):
        """Wrap a result or an error message in a uniform response body."""
        return_data = {
            "uuid": str(uuid.uuid1()),
            "error": error,
            "message": msg
        }
        print(return_data)
        return return_data


    def main_handler(event, context):
        # style:
        #   1: free verse
        #   2: rhymed verse
        #   3: acrostic (hidden-head) verse
        #   4: verse with hidden words
        body = json.loads(event["body"])
        style = body["style"]
        content = body.get("content", None)
        # Styles 3 and 4 are built around user-supplied content.
        if style in ('3', '4') and not content:
            return return_msg(True, "Please enter content parameter")
        if style == '1':
            return return_msg(False, writer.free_verse())
        elif style == '2':
            return return_msg(False, writer.rhyme_verse())
        elif style == '3':
            return return_msg(False, writer.cangtou(content))
        elif style == '4':
            return return_msg(False, writer.hide_words(content))
        else:
            return return_msg(True, "Please enter the correct style parameter")
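Before deploying, it can help to exercise the request handling locally without loading the model. The sketch below is only an illustration: `StubWriter`, `make_event`, and `dispatch` are hypothetical helpers, the event carries only the `body` field that the handler reads, and `dispatch` mirrors the handler's routing rather than importing it.

```python
import json

class StubWriter:
    """Hypothetical stand-in for the object returned by start_model()."""
    def free_verse(self):
        return "free verse"
    def rhyme_verse(self):
        return "rhymed verse"
    def cangtou(self, content):
        return "acrostic for " + content
    def hide_words(self, content):
        return "hidden words for " + content

def make_event(style, content=None):
    """Build a minimal event: a JSON string under the "body" key."""
    payload = {"style": style}
    if content is not None:
        payload["content"] = content
    return {"body": json.dumps(payload)}

def dispatch(event, writer):
    """Mirror the handler's routing and return (error, message)."""
    body = json.loads(event["body"])
    style, content = body.get("style"), body.get("content")
    if style in ("3", "4") and not content:
        return True, "Please enter content parameter"
    routes = {
        "1": lambda: writer.free_verse(),
        "2": lambda: writer.rhyme_verse(),
        "3": lambda: writer.cangtou(content),
        "4": lambda: writer.hide_words(content),
    }
    if not isinstance(style, str) or style not in routes:
        return True, "Please enter the correct style parameter"
    return False, routes[style]()

writer = StubWriter()
print(dispatch(make_event("1"), writer))
print(dispatch(make_event("3"), writer))
print(dispatch(make_event("3", "春夏秋冬"), writer))
print(dispatch(make_event("9"), writer))
```

Note that `style` is compared as a string, so the request body should send `"1"` rather than the number `1`.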

You also need to prepare the YAML file:

    getUserIp:
      component: "@serverless/tencent-scf"
      inputs:
        name: autoPoem
        codeUri: ./
        exclude:
          - .gitignore
          - .git/**
          - .serverless
          - .env
        handler: index.main_handler
        runtime: Python3.6
        region: ap-beijing
        description: Automatic ancient poetry writing
        namespace: serverless_tools
        memorySize: 512
        timeout: 10
        events:
          - apigw:
              name: serverless
              parameters:
                serviceId: service-8d3fi753
                protocols:
                  - http
                  - https
                environment: release
                endpoints:
                  - path: /auto/poem
                    description: Automatic ancient poetry writing
                    method: POST
                    enableCORS: true

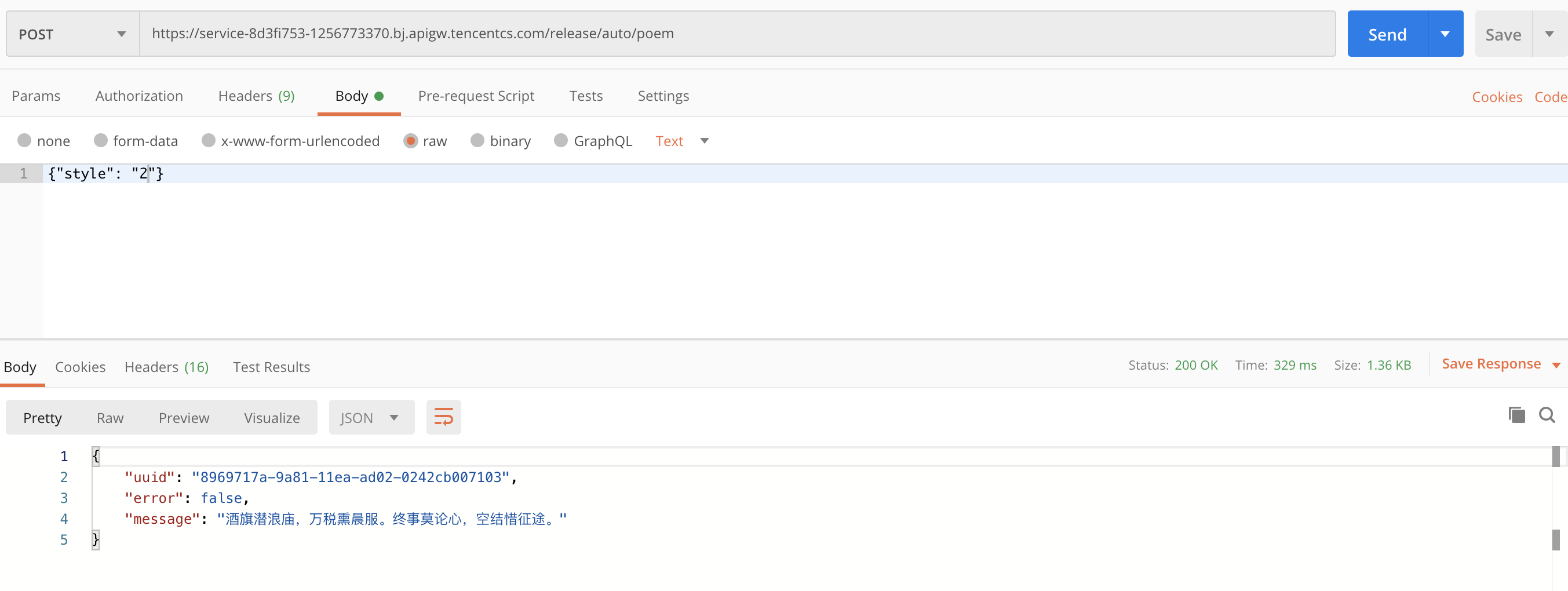

At this point, we can deploy the project through the Serverless Framework CLI. After deployment, we can test the interface with Postman.
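The same request can also be sent from a script. The following is a minimal sketch using only the Python standard library; `API_URL` is a placeholder that must be replaced with the API Gateway address of your own deployment, and `write_poem` assumes the gateway returns the handler's dictionary as JSON.

```python
import json
import urllib.request

# Placeholder: replace with the API Gateway address of your deployment.
API_URL = "https://example.com/release/auto/poem"

def build_request(style, content=None, url=API_URL):
    """Build the POST request with the JSON body the function expects."""
    payload = {"style": style}
    if content is not None:
        payload["content"] = content
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def write_poem(style, content=None, url=API_URL):
    """Send the request and decode the JSON reply (uuid, error, message)."""
    request = build_request(style, content, url)
    with urllib.request.urlopen(request, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))

# Inspect the request without sending it.
request = build_request("3", "春夏秋冬")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Calling `write_poem("1")` against a real deployment should return a dictionary whose `message` field holds the generated poem, or an error description when `error` is true.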

Summary

In this article, we took an existing deep learning project, trained it locally, saved the model, and deployed it on Tencent Cloud Functions. Combined with API Gateway, this gives us a deep learning based API for writing ancient poetry.
